This is the question I get asked most often, usually by CTOs who have already lived through one expensive wrong answer. The real question here, however, isn't about code quality or tech debt metrics. It's about the ratio of accidental complexity to essential complexity in your current system.

If most of the complexity in your codebase exists because of the way it was built, not because of the problem it solves, you're paying a permanent tax that incremental modernization will only partially reduce. In those cases, a well-scoped re-engineering effort almost always has a lower 3-year total cost than a modernization program that never fully resolves the root architectural constraint.
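To make the 3-year comparison concrete, here is a minimal sketch of how I would frame the arithmetic. It is a toy model, not a costing method: the `Option` fields, the idea of expressing accidental complexity as a fractional "tax" on annual run cost, and every number in the usage example are assumptions made up for illustration. Plug in your own estimates before drawing any conclusion.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """One way forward for the system. All fields are hypothetical inputs."""
    name: str
    upfront_cost: float            # one-time spend to start the program
    annual_run_cost: float         # yearly engineering cost if there were no tax
    complexity_tax: float          # extra cost fraction caused by accidental complexity
    tax_reduction_per_year: float  # share of the remaining tax removed each year (0..1)


def three_year_cost(option: Option, years: int = 3) -> float:
    """Sum the upfront cost plus each year's run cost inflated by the remaining tax."""
    total = option.upfront_cost
    tax = option.complexity_tax
    for _ in range(years):
        total += option.annual_run_cost * (1 + tax)
        # The approach removes part of the remaining tax before the next year.
        tax *= 1 - option.tax_reduction_per_year
    return total


if __name__ == "__main__":
    # Illustrative numbers only: cheap start, slow tax reduction.
    modernize = Option("modernize", 200, 1000, 0.6, 0.15)
    # Illustrative numbers only: expensive start, fast tax reduction.
    re_engineer = Option("re-engineer", 900, 1000, 0.6, 0.8)
    for option in (modernize, re_engineer):
        print(f"{option.name}: {three_year_cost(option):.1f}")
```

The point of the model is the shape of the trade-off: a higher upfront cost can still win over three years when it removes most of the tax early, and it loses when the tax was small to begin with. Which side you land on depends entirely on the inputs you believe.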

What I've seen consistently: teams that try to modernize a fundamentally broken data model spend 18 months making it slightly less broken. Teams that re-engineer the data model as a standalone bounded context in month 1 spend 18 months building actual product features on top of it.

Ownership

Ownership Goal

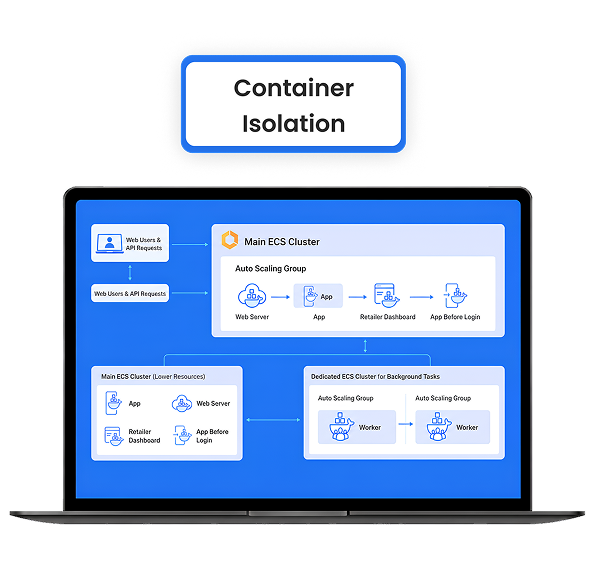

Goal Architecture

Architecture Scalability

Scalability Speed (Long-term)

Speed (Long-term) Change Handling

Change Handling Cost Impact

Cost Impact Product Control

Product Control Engineering Focus

Engineering Focus Business Outcome

Business Outcome