ON THIS PAGE

- What is AI Development?

- AI Development vs. Traditional Software Development

- Why Businesses Invest in AI Development

- Key Components of AI Development

- The AI Development Process (Step by Step)

- Essential AI Development Tools and Tech Stack for 2026

- Common Challenges in AI Development and Solutions

- Industries and Use Cases Where AI Development is Making the Biggest Impact

- What is the Cost of AI Development?

- The Future of AI Development

- AI Development Best Practices and Checklists

- How Radixweb Can Help You with AI Development

Why You Should Read This Article: If you're tired of AI hype without delivery, this guide provides tried-and-tested insights on AI development – the step-by-step lifecycle, essential tools, top use cases, challenges, emerging trends, and more. It is written for leaders and teams who need clarity on how AI is built, deployed, governed, and scaled in real-world environments, with a focus on practical execution and outcomes.

Key Takeaways (TL;DR)

- AI development is a lifecycle, not a feature build.

- AI systems differ fundamentally from traditional software.

- Businesses invest in AI to drive specific outcomes.

- The AI development process must be production-first.

- The 2026 AI tech stack emphasizes operational maturity.

- Common AI challenges are operational, not algorithmic.

- AI is delivering the most impact in data-rich industries.

- AI development costs extend beyond initial builds.

- Future AI development favors responsible, scalable systems.

- Following best practices reduces risk significantly.

- Radixweb supports AI development end to end.

AI development is the end-to-end process of transforming raw ideas into production-ready intelligent systems. It includes multiple stages, starting from identifying high-impact problem areas (e.g., boosting operational efficiency) to deploying working models, monitoring for drift, and iterating with 2026 trends such as AI agents and edge computing.

In 2026, artificial intelligence is predicted to attract roughly $500 billion in investment, with approximately 80% of enterprises adopting AI to automate, innovate, and build what wasn't possible before.

Despite that, an astonishing 70% of AI projects fail. Reasons include poor planning, data pitfalls, and scaling bottlenecks, and these failures have cost businesses millions. Because the path from idea to working system is rarely straightforward, it helps to have a clear map of the process before you commit budget or spin up a new AI initiative.

Our AI development guide starts with the foundations, i.e., what it is, how it works, the main types, and the key components, then moves into the practical details like step‑by‑step development stages, tools and frameworks, and the programming languages you’re most likely to use.

From there, we’ll zoom out to where Artificial Intelligence is making the biggest impact across industries, the highest‑value use cases, and what all of this means for costs, benefits, challenges, and future trends.

What is AI Development?

AI development refers to the complete process of designing, building, deploying, and operating systems that can perform tasks requiring human intelligence and judgment. These systems learn from data to automate workflows or generate outputs and deliver tangible business outcomes, such as cost savings or efficiency gains.

In practice, the end-to-end AI development process includes ideation, data handling, model building, deployment, and monitoring. It’s an ongoing cycle customized to real-world factors like budgets and regulations.

AI Development vs. Traditional Software Development

Traditional software works with predefined logic, i.e., if-then rules. AI instead infers patterns from data, which makes it adaptive but also less predictable. A model can hit 92% accuracy one month and drop to 80% the next as new trends shift the underlying data.

Additionally, AI demands data pipelines and retraining loops, while traditional software typically needs only code updates. Upfront AI development costs are often 2-3x higher than for conventional software development, largely because of data labeling, but ROI tends to arrive faster for repetitive tasks.

| Aspect | AI Development | Traditional Software Development |
|---|---|---|
| Core Input | Data volumes (GBs-TBs) | Specs and user stories |
| Output Nature | Probabilistic (e.g., 87% confidence) | Deterministic (always exact) |
| Iteration Cycle | Weekly (retrain on drift) | Monthly releases |
| Risk Factors | Bias, overfitting, drift | Bugs, scalability limits |
| Team Skills | Data scientists + developers | Developers + QA |
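To make the "Output Nature" row concrete, here is a minimal sketch contrasting a fixed if-then rule with a toy scoring model. The function names, the threshold, and the weight and bias values are all hypothetical placeholders, not parameters from a real system.

```python
import math


def rule_based_flag(amount: float, limit: float = 1000.0) -> bool:
    """Traditional logic: a fixed if-then rule that always gives the same answer."""
    return amount > limit


def model_score(amount: float, weight: float = 0.004, bias: float = -4.0) -> float:
    """Toy logistic model: returns a probability rather than a yes/no answer.

    The weight and bias are made-up values standing in for learned parameters.
    """
    return 1.0 / (1.0 + math.exp(-(weight * amount + bias)))


for amount in (200.0, 1000.0, 1500.0):
    print(amount, rule_based_flag(amount), round(model_score(amount), 3))
```

The rule only changes when someone edits the code. The score changes whenever the parameters are re-learned from new data, which is why the AI column of the table lists retraining and drift.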

Why Businesses Invest in AI Development

Organizations invest in well-designed AI systems to solve specific operational and decision-making challenges that rule-based systems alone cannot address efficiently. Enterprise AI development helps teams turn messy, underused data into decisions and predictions that directly move KPIs like revenue, churn, and operational efficiency.

With data complexity growing, businesses are increasingly focused on scaling artificial intelligence across business operations to generate insights and improve performance across departments.

Here we've jotted down the most visible and expected benefits of AI development:

Direct Business Value and ROI

AI projects that are scoped properly can deliver measurable financial returns. Companies use AI-driven process automation for enterprise workflows to automate repetitive tasks, reduce manual effort, and improve accuracy, all of which lead to expected ROI.

Over time, AI-enabled workflows compound value, as every new data point improves models, which continuously refine decisions and outcomes.

Cost Reduction and Operational Efficiency

A major reason for AI investment is the ability to automate repetitive, high-volume processes, since tasks like document review, support ticket triage, claims processing, and basic analytics can be handled by models at scale, 24/7, with consistent quality. Apart from cutting labor costs, it reduces errors, rework, and bottlenecks that slow down operations, even in tightly regulated industries like healthcare and fintech.

Better Decisions with Data-Driven Insights

Businesses sit on huge datasets that traditional reporting barely scratches. However, AI systems can detect patterns and correlations that humans miss. This enables proactive decisions, such as preventing a fraudulent transaction or adjusting pricing based on predicted demand.

Enterprises invest in predictive analytics for data-driven decision making and to generate evidence-based strategies at both operational and strategic levels.

Competitive Advantage and Customer Experience

AI is increasingly a differentiator in crowded markets. Personalized recommendations, intelligent chatbots, adaptive pricing, and real-time risk scoring all create better, stickier CX. If your competitors are using AI, standing still becomes a real risk.

Investing in building AI-powered software lets companies ship intelligent features, respond to market changes faster, and position themselves as technologically mature partners, something that’s crucial in enterprise deals.

Scalability and Innovation at Speed

Traditional software products tend to break down with volume spikes or new use cases. AI systems designed to scale from prototype to production, once in place, can scale to millions of events, transactions, or queries with relatively small marginal cost. This scalability makes it possible to launch new data-powered features, like anomaly detection or content generation, without redesigning everything from scratch.

Key Components of AI Development

AI development hinges on a handful of interconnected components that form a reliable pipeline from raw ideas to production-ready systems. These are the practical building blocks; skipping any one area often hinders the overall effectiveness of the solution, regardless of how strong the model appears in isolation.

Core Technical Components

AI system development is built on five components - data, models, infrastructure, processes, and monitoring. Data is the fuel, models are the engines, infrastructure handles compute, while processes orchestrate the lifecycle, and monitoring keeps everything performant post-launch.

1. Data Pipeline: The Foundation (60-80% of Effort)

No AI works without robust data handling. AI engineers managing data pipelines and model training start with collection from APIs/databases, then clean (remove duplicates, handle missing values), label, and augment (synthetic data for privacy). Poor data quality tanks accuracy by 20-40%.

2. Model Development: Algorithms and Training

Teams select models based on real-world AI use cases across industries, for example, regression for forecasting and CNNs for vision. Models are trained on split datasets (80/20 train/test), tuned with hyperparameters, and validated with metrics like F1-score.

3. Infrastructure and MLOps Stack

Artificial Intelligence models must be packaged, deployed, and integrated into existing software or workflows. This includes model serving, API design, scalability planning, and failure handling. Decisions made here often determine long-term operational stability and cost efficiency.

4. Integration, Ethics, and Monitoring Layers

AI models are continuously observed in production for performance drift, data changes, and unexpected behavior. Data governance frameworks for AI systems help ensure compliance, security, and responsible use. Continuous improvement processes allow models to adapt as conditions evolve.

The AI Development Process (Step by Step)

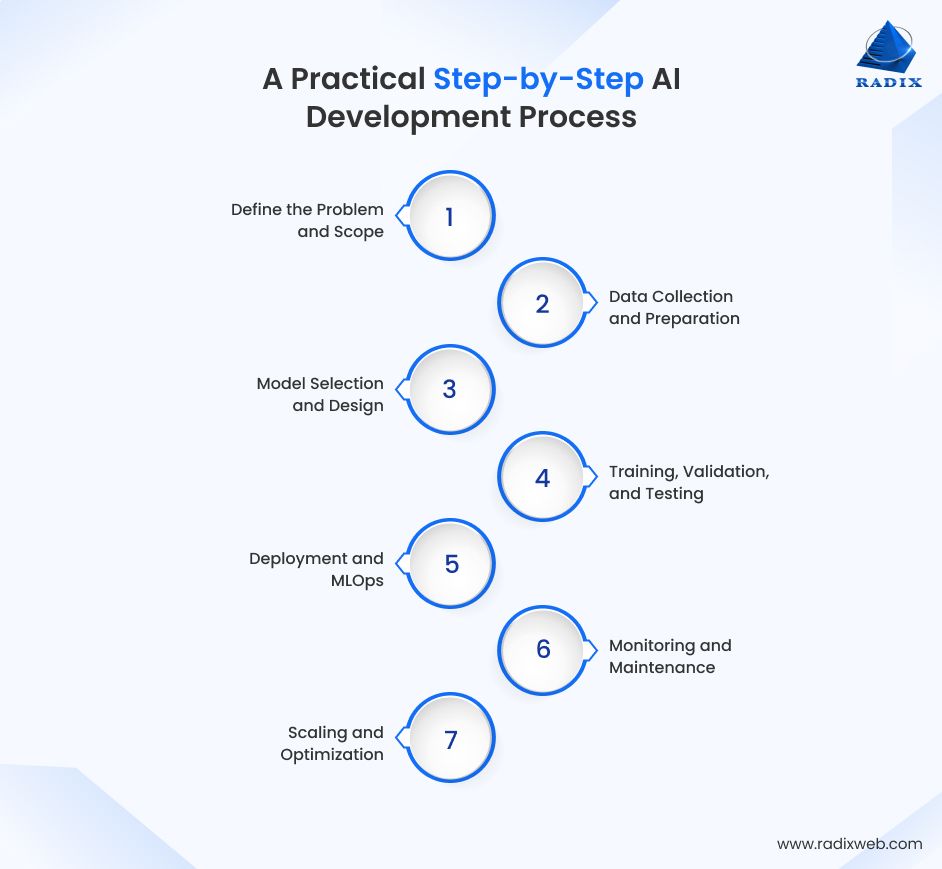

Building AI is an iterative cycle that teams repeat to refine models and adapt to changes. The following seven stages of AI development, updated for 2026 demands like agentic AI and edge computing, go beyond standard guides by including enterprise tools, examples, and pitfalls to avoid.

Step 1: Define the Problem and Scope

The first phase in the AI development lifecycle is to establish a solvable, business-aligned problem. Instead of "build AI for everything," you should pinpoint a concrete target or KPI like "reduce processing time by 25%" or "boost accuracy to 90%."

To get there, run stakeholder workshops to map requirements: What data exists? What constraints apply around budget and timelines?

You can use OKRs to structure this thinking, for example: Objective – automate fraud detection; Key Result – reduce false positives by 30% in six months.

Tools to Use

- Miro for ideation

- Jira for tickets

Common Pitfall

- Scope creep: lock features early, and document assumptions (e.g., data availability) to justify ROI upfront.

The critical outcome of this step is a well-bounded scope, which typically runs on a 4–6 week horizon. You might feel spending 10–20% of your total effort on scoping is front‑loaded, but it prevents far more expensive rework later.

Step 2: Data Collection and Preparation

The next step is to collect and prepare the data that will power your model. Data work consumes 60–80% of the total effort, since models are only as good as the data they learn from. You can pull data from internal databases, CSV exports, data warehouses, public sources, or synthetic data generators if you need to protect sensitive information.

After collection, you clean the data. Tasks here include:

- Removing duplicates

- Handling missing values

- Normalizing numeric ranges

- Encoding categorical variables

You should also audit the data. The pipeline must comply with regulations such as GDPR or HIPAA where relevant.

In practice, you will split your dataset into training, validation, and test sets, most often in an 80/10/10 or similar ratio. Good data preparation dramatically reduces the risk of discovering quality issues late in the project, where they are more expensive to fix.
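As a rough illustration of the cleaning and splitting steps above, the sketch below uses only the Python standard library. The `amount` field, the helper names, and the sample rows are hypothetical. In a real pipeline a library such as Pandas would normally handle this, and categorical encoding is omitted for brevity.

```python
import random


def clean_rows(rows):
    """Drop exact duplicates, fill missing 'amount' values, and scale to 0-1."""
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(dict(row))

    # Fill missing values with a simple median (upper middle for even counts).
    known = sorted(r["amount"] for r in unique if r["amount"] is not None)
    median = known[len(known) // 2]
    for r in unique:
        if r["amount"] is None:
            r["amount"] = median

    # Min-max normalization of the numeric range.
    lo = min(r["amount"] for r in unique)
    hi = max(r["amount"] for r in unique)
    for r in unique:
        r["amount_scaled"] = (r["amount"] - lo) / (hi - lo) if hi > lo else 0.0
    return unique


def split_80_10_10(rows, seed=42):
    """Shuffle reproducibly, then cut into train / validation / test sets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * 0.8)
    n_val = int(len(shuffled) * 0.1)
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


raw = [
    {"id": 1, "amount": 10.0},
    {"id": 1, "amount": 10.0},  # exact duplicate
    {"id": 2, "amount": None},  # missing value
    {"id": 3, "amount": 30.0},
    {"id": 4, "amount": 20.0},
]
cleaned = clean_rows(raw)
train, val, test = split_80_10_10(list(range(20)))
print(len(cleaned), len(train), len(val), len(test))
```

Fixing the shuffle seed keeps the split reproducible, so later experiments are compared on the same held-out rows.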

Step 3: Model Selection and Design

In the third stage of AI development, you choose and design the model that will best address your problem. Model selection has to match the nature of your task and data to appropriate algorithms. For example, you can use CNNs for image‑based tasks and transformer models for language or generative tasks.

It is usually wise to start with a few simple baseline models before moving to more complex deep learning architectures. You can also use transfer learning to fine‑tune pre‑trained models and significantly reduce training time and data requirements.
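One way to read "start with simple baselines" is to compare a predict-the-mean baseline against a one-variable least-squares line before reaching for anything deeper. The sketch below does that in plain Python. The monthly demand figures are made up for illustration, and the error is measured on the same data used for fitting, so it only shows the comparison mechanics, not real generalization.

```python
def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mae(y_true, y_pred):
    """Mean absolute error."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


months = [1, 2, 3, 4, 5, 6]
demand = [12.0, 14.0, 15.0, 19.0, 20.0, 22.0]  # hypothetical values

# Baseline 1: always predict the historical mean.
mean_pred = [sum(demand) / len(demand)] * len(demand)

# Baseline 2: a straight-line trend.
slope, intercept = fit_line(months, demand)
line_pred = [slope * m + intercept for m in months]

print("mean baseline MAE:", mae(demand, mean_pred))
print("linear baseline MAE:", mae(demand, line_pred))
```

A more complex model then has to beat these numbers on held-out data to justify its extra training and serving cost.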

The table below shows a practical mapping between common algorithms, their best‑fit use cases, and typical frameworks:

| Algorithm | Best For | Framework |
|---|---|---|
| Regression | Forecasting | PyTorch |
| CNN | Computer Vision | Keras |
| Transformer | NLP and GenAI | Hugging Face stack |

Step 4: Training, Validation, and Testing

With a candidate model in place, you move into the training, validation, and testing phase, where you train your model on the training dataset, tune it with the validation set, and finally evaluate it on the test set to estimate how it will perform in real enterprise scenarios.

A common practice is to use an 80/20 split between training and testing, sometimes with an additional validation subset created from the training data. You will select evaluation metrics that fit your use case.

During training, you monitor both the training and validation metrics to detect overfitting or underfitting. The result of this step is a model that performs well on historical data and is very likely to generalize to new, unseen data.
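The sketch below shows one possible way to compute an F1-score by hand and to flag a suspicious gap between training and validation scores. The `max_gap` threshold of 0.10 is an arbitrary assumption for illustration, and the labels are invented; production code would more likely rely on an established metrics library.

```python
def f1_score(y_true, y_pred):
    """F1 for binary labels (1 = positive), computed from raw counts."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def looks_overfit(train_score, val_score, max_gap=0.10):
    """Heuristic: a large train-minus-validation gap suggests overfitting."""
    return (train_score - val_score) > max_gap


y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
print("F1:", f1_score(y_true, y_pred))
print("overfit?", looks_overfit(train_score=0.99, val_score=0.80))
```

The gap check is only a first signal. Learning curves across epochs give a fuller picture of whether the model is over- or underfitting.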

Step 5: Deployment and MLOps

If the model meets your performance thresholds, you prepare it for deployment. In most modern setups, this involves packaging the model as a service, exposing it through an API, and deploying it using containers.

Some useful frameworks to use in this phase are:

- FastAPI or Flask to build the serving layer

- Docker to containerize the service

- Kubernetes to manage scaling and availability

MLOps practices then tie the whole Artificial Intelligence development lifecycle together. You track experiments and model versions, manage configuration, automate deployment pipelines, and integrate with logging and monitoring tools. At the end of this step, your model is live and reachable by your apps, dashboards, or external clients.
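To give a feel for what the serving layer does, here is a framework-agnostic sketch of a prediction handler with basic input validation. The `predict` function is a hypothetical placeholder rather than a trained model, and the field names `amount` and `items` are assumptions. In practice this logic would sit inside a FastAPI or Flask route.

```python
import json


def predict(features):
    """Placeholder model: a hypothetical weighted sum, not a trained model."""
    return 0.5 * features["amount"] + 2.0 * features["items"]


def handle_predict(body: str):
    """Return (status_code, json_body) for a raw JSON request body."""
    try:
        payload = json.loads(body)
        features = {
            "amount": float(payload["amount"]),
            "items": float(payload["items"]),
        }
    except (ValueError, KeyError, TypeError):
        # Malformed JSON, missing fields, or non-numeric values.
        return 400, json.dumps({"error": "expected numeric 'amount' and 'items'"})
    return 200, json.dumps({"prediction": predict(features)})


print(handle_predict('{"amount": 10, "items": 2}'))
print(handle_predict("not json"))
```

Rejecting bad input with a clear error, instead of letting the model raise, is the kind of failure handling that keeps a deployed service predictable for its callers.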

Step 6: Monitoring and Maintenance

AI development shifts into a continuous monitoring and maintenance phase after deployment.

The model’s performance can degrade over time due to changes in user behavior, market conditions, or data distributions. You therefore monitor metrics such as accuracy, latency, error rates, and input data drift. When you detect significant drops in performance or shifts in data patterns, you plan retraining or adjust your model and features.

Good monitoring includes both technical and business metrics. On the technical side, you watch for issues like memory usage, throughput, and error codes. On the business side, you track KPIs such as conversion rate, fraud detection rate, or claim approval time.
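Input drift can be quantified in several ways. One commonly used option, chosen here as an assumption since the text does not name a specific metric, is the Population Stability Index (PSI), which compares how a feature is distributed across bins at training time and in production. The bin count and sample data below are illustrative only.

```python
import math


def psi(expected, actual, bins=5):
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]       # feature values seen in training
shifted = [0.5 + i / 200 for i in range(100)]  # live values skewed upward

print("no drift:", psi(baseline, baseline))
print("drifted:", psi(baseline, shifted))
```

A widely quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds should be tuned per feature and tied back to the business KPIs mentioned above.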

Step 7: Scaling and Optimization

The final step of the AI development roadmap is about scaling and optimizing the AI system so it can handle larger volumes, more use cases, and stricter constraints.

Scaling can mean:

- Serving more requests per second

- Supporting additional geographies

- Adding new product features

You can optimize inference speed and adopt distributed training and serving strategies when your workloads grow beyond a single machine.

In parallel, you may introduce approaches like federated learning to train models across multiple data sources without centralizing sensitive data. At this point, your AI initiative evolves from a single project into a reusable platform that supports ongoing innovation across your organization.
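The core idea of federated learning can be sketched with federated averaging: each site trains locally and shares only its model weights, which a coordinator combines in proportion to each site's sample count. The weight vectors and counts below are invented for illustration, and real deployments add secure aggregation and many training rounds.

```python
def federated_average(client_updates):
    """Combine client weights, weighted by each client's number of samples.

    client_updates: list of (weights, n_samples) tuples, where weights is a
    list of floats of equal length for every client.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [
        sum(weights[i] * n for weights, n in client_updates) / total
        for i in range(dim)
    ]


# Two hypothetical sites; raw records never leave either site.
updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
global_weights = federated_average(updates)
print(global_weights)
```

Only the aggregated parameters are shared, which is what lets sensitive data stay where it was collected.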

Essential AI Development Tools and Tech Stack for 2026

In 2026, an effective AI development tech stack includes tools for data engineering, model development, deployment and MLOps, monitoring, and governance.

Programming Languages and Frameworks

Python remains the undisputed leader among programming languages for AI development. It accounts for 95% of AI work due to its extensive ecosystem.

Core Python-based AI development frameworks include:

- PyTorch (2.5+) for prototyping, R&D, and dynamic graphs

- TensorFlow/Keras for stable mobile and enterprise deployments

- Hugging Face Transformers for NLP/GenAI fine-tuning, with 1M+ pre-trained models

Cloud Platforms and MLOps

Cloud platforms handle compute, storage, and collaboration. Azure Machine Learning, AWS SageMaker, and Google Vertex AI are the top three cloud platforms available today for AI development.

| Platform | Key Feature | Enterprise Fit |
|---|---|---|
| Azure ML | Compliance, MLflow native | Regulated industries |
| SageMaker | Built-in pipelines | High-scale training |
| Vertex AI | Multimodal AutoML | Quick MVPs |

MLOps tools like MLflow (tracking), Kubeflow (orchestration), and Weights & Biases (collaboration) automate reproducibility across machine learning pipelines. They also support operationalizing machine learning models with MLOps in production environments.

Data Management and Annotation Tools

Data management tools handle collection, cleaning, versioning, and labeling. Using these tools is critical since data prep consumes 60-80% of AI project time.

- Pandas/Dask: Processes and cleans datasets from GBs to TBs with parallel computing for enterprise-scale ETL workflows.

- Apache Spark: Manages petabyte-scale data processing with SQL integration for distributed feature engineering.

- Label Studio: Open-source platform for team-based data annotation supporting images, text, and video labeling.

Deployment, Serving, and Monitoring

Deployment packages models as scalable APIs and serving handles inference at production scale. Monitoring tracks drift, latency, and accuracy to prevent failures post-launch.

- Docker/Kubernetes: Containerizes models for portable, auto-scaling deployment across cloud and on-premises environments.

- BentoML: Packages ML models as optimized APIs with A/B testing and multi-model serving capabilities.

- Prometheus/Grafana: Monitors model metrics, data drift, and infrastructure health with real-time dashboards.

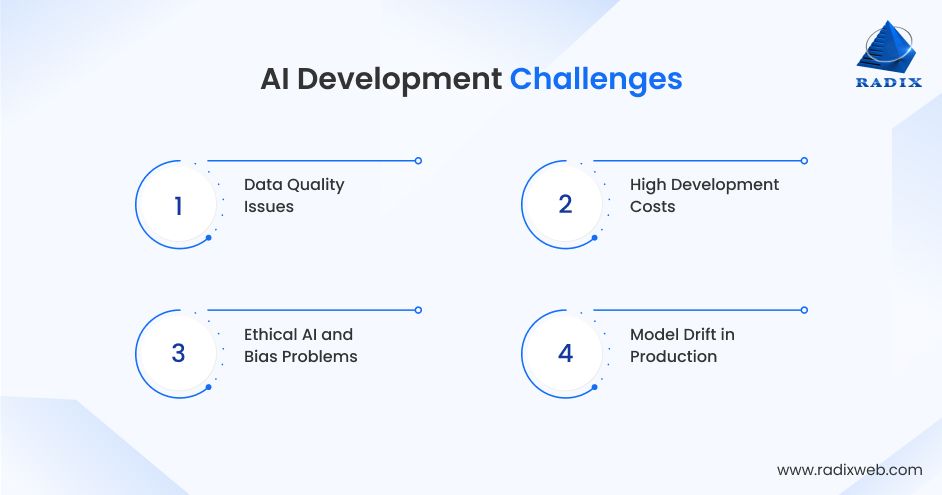

Common Challenges in AI Development and Solutions

AI projects face predictable hurdles like poor data quality, data drift, high cost of development, and ethical concerns. These pitfalls cause 70% of initiatives to derail, but each has practical fixes.

Data Quality Issues

Raw datasets come with duplicates, missing values, imbalanced classes, and measurement errors that destroy model performance. A single corrupted feature can drop accuracy by 20-40%, which means rework late in projects.

Solution - Deploy systematic ETL pipelines using Pandas or Dask for cleaning, validation rules, and schema enforcement. Generate synthetic data via tools like Gretel.ai to augment scarce categories. Regular quality audits catch 80% of issues before training begins.

High Development Costs

Training large models from scratch consumes massive GPU hours. For example, a 7B parameter LLM can cost $10K+ in cloud compute alone.

Solution - Parameter-Efficient Fine-Tuning (PEFT) methods like LoRA can reduce trainable parameters by up to 10,000x while preserving roughly 95% of performance.
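The saving from LoRA comes from simple arithmetic: instead of updating a full d_out x d_in weight matrix, it trains two small matrices of rank r, for r x (d_in + d_out) parameters. The sketch below works this out for a single layer. The layer size of 4096 and rank of 8 are hypothetical values chosen for illustration.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors: (d_out x rank) + (rank x d_in)."""
    return rank * (d_in + d_out)


d = 4096                        # hypothetical hidden size of one weight matrix
full = d * d                    # parameters updated by full fine-tuning
lora = lora_trainable_params(d, d, rank=8)

print("full fine-tuning:", full)
print("LoRA (rank 8):", lora)
print("reduction factor:", full // lora)
```

This is the per-matrix ratio. The model-wide reduction is usually much larger because LoRA is typically applied to only a subset of layers while everything else stays frozen.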

Ethical AI and Bias Problems

AI models can amplify dataset biases and create unfair outcomes. On top of this, unexplained "black box" predictions fail regulatory audits, whereas protected class disparities above 5% trigger compliance violations and reputational damage.

Solution - Apply SHAP/LIME for per-prediction explainability. Use Fairlearn metrics to enforce demographic parity and NIST AI Risk Management Framework guidelines with monthly bias audits to maintain compliance and auditability.
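Demographic parity can be checked with a few lines of arithmetic: compute the share of positive predictions per group and take the largest gap. The sketch below is a hand-rolled illustration, not the Fairlearn API, and the predictions and group labels are invented. The 5% threshold mirrors the figure quoted above.

```python
def selection_rates(predictions, groups):
    """Share of positive (1) predictions for each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


preds = [1, 1, 0, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap = demographic_parity_difference(preds, groups)
print("selection rates:", selection_rates(preds, groups))
print("gap:", gap, "-> needs review" if gap > 0.05 else "-> within threshold")
```

A gap above the threshold does not prove the model is unfair on its own, but it is a clear trigger for the deeper audit described in the solution.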

Model Drift in Production

Deployed models degrade as real-world data distributions shift due to seasonality, policy changes, or competitor actions. Unmonitored systems lose 10-20% accuracy quarterly.

Solution – Teams implement WhyLabs/Prometheus monitoring for input drift detection. Never run production models over 90 days without validation.

Industries and Use Cases Where AI Development is Making the Biggest Impact

AI delivers outsized ROI where high-volume data can be used for repetitive decision-making. For this reason, healthcare (78% adoption), finance (71%), and manufacturing (50%) lead the 2026 rankings. These sectors see 3-4x returns with automation, prediction, and personalization.

| Industry | Adoption Rate | CAGR | Primary Driver |
|---|---|---|---|
| Healthcare | 78% | 36.8% | Clinical decision support |
| Finance | 71% | 19.6% | Fraud detection and risk models |
| Technology | 83% | 27% | Code generation and product features |
| Manufacturing | 77% | 18% | Predictive maintenance |
| Retail | 77% | 21% | Demand forecasting and personalization |

High-Value Use Cases

Predictive maintenance dominates manufacturing applications, where sensor data and AI models cut equipment downtime by 50%. Siemens reports millions in annual savings through these systems.

Financial institutions deploy real-time fraud detection that delivers greater ROI by preventing unauthorized transactions before they clear. Healthcare organizations use diagnostic imaging AI, such as DeepMind's systems, that achieve over 90% accuracy.

Retail giants like Amazon and Walmart increase conversion rates by 35% through personalized product recommendations powered by customer behavior analysis.

Logistics companies such as UPS optimize delivery routes with AI, reducing fuel consumption across their fleets.

Generative AI in 2026 has emerged as the fastest-growing category, particularly for document automation in legal departments and precision agriculture applications that deliver 23% annual growth rates.

What is the Cost of AI Development?

AI development costs range from $20,000 for basic prototypes to $1M+ for enterprise platforms, depending on scope, complexity, and data needs.

Unlike traditional software, AI costs are not concentrated only in the build phase. They extend with data preparation, infrastructure, deployment, and ongoing maintenance.

Cost Breakdown

- Data Prep: 30-40%

- Model Development: 25-35%

- Infrastructure: 15-20%

- Team/Integration:

- Maintenance: 10-15%

For pilots and MVPs, costs typically run in the tens of thousands of dollars. Production-grade systems for core business functions usually need a substantially larger investment, especially as usage scales. In enterprise environments, long-term operational costs are likely to exceed initial development costs.

The Future of AI Development

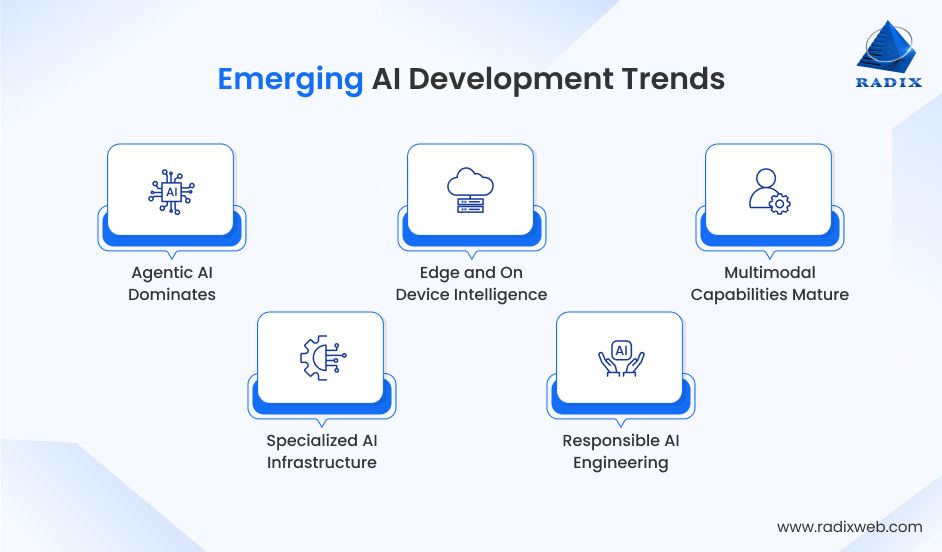

In 2026 and the years ahead, Artificial Intelligence is shifting decisively from experimentation and PoCs to enterprise-scale execution. Top AI development trends include agentic systems, efficient infrastructure, and multimodal capabilities. Leaders prioritize measurable ROI over hype, with 83% of enterprises deploying production AI.

1. Agentic AI Dominates

AI agents are now autonomous decision-makers, capable of handling multi-step workflows. "Super agents" orchestrate tasks like email drafting, code deployment, and data analysis without constant supervision.

2. Edge and On-Device Intelligence

Smaller language models (SLMs) run directly on phones, factory equipment, and IoT devices, eliminating cloud latency and costs. Privacy improves as sensitive data never leaves devices. Dynamic edge-to-cloud routing has become standard across industries.

3. Multimodal Capabilities Mature

AI models can now process text, images, video, and audio natively for richer enterprise applications. Physical AI powers warehouse robots and surgical assistance, and generative models are moving beyond chat interfaces to tackle complex problems like drug discovery.

4. Specialized AI Infrastructure

Custom ASICs and chiplet architectures are replacing generic GPUs and cutting training costs by around 50%. Global "superfactories" are now delivering dense compute capacity, and open-source governance frameworks ensure enterprise-grade reliability and auditability.

5. Responsible AI Engineering

Permissions, audit trails, and reliability metrics have become mandatory for production systems. GraphRAG architectures ground outputs in enterprise data sources, which is why hallucination rates drop 70% through systematic validation pipelines, an approach detailed in Microsoft's GraphRAG research.

AI Development Best Practices and Checklists

Here are some proven practices to build AI systems that deliver sustained business value:

Planning and Scoping

- Define measurable KPIs upfront (25% efficiency gain, 90% accuracy)

- Document data availability, compliance needs, and success criteria

- Create a 4-6 week MVP roadmap with clear Go/No-Go gates

Data Management

- Allocate 60-80% budget/time for data quality

- Use synthetic data generators for privacy-sensitive domains

- Split data 80/10/10; validate subsets before full training

Development Workflow

- Prototype 3 baseline models before complex architectures

- Track experiments systematically

- Use transfer learning/LoRA to cut training costs 70%

Deployment and Operations

- Containerize with Docker, deploy via Kubernetes/MLflow

- Monitor drift weekly and retrain on 5% accuracy drops

- A/B test new versions, with mandatory rollback capability
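The weekly monitoring rule in this checklist could be encoded as a small check like the one below. It assumes "5% accuracy drop" means five percentage points below the accuracy measured at deployment. That reading, the function name, and the sample numbers are all assumptions for illustration.

```python
def first_retrain_week(baseline_accuracy, weekly_accuracies, max_drop=0.05):
    """Return the first week (1-based) whose accuracy falls max_drop or more
    below the baseline, or None if no week crosses the threshold."""
    for week, accuracy in enumerate(weekly_accuracies, start=1):
        if baseline_accuracy - accuracy >= max_drop:
            return week
    return None


weekly = [0.91, 0.90, 0.85, 0.80]  # hypothetical weekly evaluation results
print(first_retrain_week(0.92, weekly))
```

Wiring a check like this into the monitoring job turns the retraining policy into an automated alert rather than a manual review step.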

Governance Checklist

- Bias audits: <5% demographic disparity across classes

- Compliance: GDPR/HIPAA data flows validated

- Quarterly third-party model reviews for production systems

How Radixweb Can Help You with AI Development

Our work with Artificial Intelligence did not begin with the recent surge in large language models or generative tools. We started nearly two decades ago, when AI initiatives were grounded in statistical models, rule-based systems, and early machine learning applied to forecasting, classification, and decision support.

That foundation still shapes how we build AI today. What has changed is the breadth and depth of what is now possible. As AI adoption accelerated across industries, we expanded our team, strengthened our research capabilities, and invested heavily in modern AI engineering, MLOps, and generative architectures to help organizations shape their enterprise AI adoption strategy.

Over the years, we have delivered production-grade systems such as a meeting intelligence platform, a legal document search solution, and a GPT-powered recruitment chatbot, among many others. Businesses evaluating which AI development company is right for their needs can explore how Radixweb compares to other leading providers.

If you are evaluating AI as a strategic capability rather than a short-term experiment, our AI development services are designed to support that journey end to end, from problem definition to production and scale.

Explore our expertise or talk to our team to assess how AI can be applied responsibly and effectively within your organization.

