
ON THIS PAGE

- Why Businesses Struggle to Choose between Fine-Tuning and RAG

- What is RAG in Artificial Intelligence – How It Works, Benefits, Challenges

- What is Fine-Tuning – How It Works, Advantages and Disadvantages

- Differences between RAG and Fine-Tuning You Need to Know

- Hybrid vs RAG vs Fine-Tuning Use Cases

- What Should You Choose for Your Business?

RAG vs fine-tuning is a debate about how architectural decisions drive cost, scalability and risk in Gen AI. RAG connects GenAI solutions to governed knowledge so that outputs stay relevant and citable. Fine-tuning, on the other hand, refines model behaviour and reasoning for domain-specific outcomes. This piece is your decision playbook for choosing between RAG, fine-tuning, or a hybrid approach. Read on!

TL;DR Summary

● Fine-tuning solves model behaviour issues; RAG solves knowledge gaps. RAG delivers fresh, citable, compliant outputs; fine-tuning generates consistent, task-specific outcomes.

● RAG has a lower cost to start and is easier to govern. Fine-tuning requires higher upfront investment but delivers latency and precision advantages.

● RAG uses grounding to reduce hallucinations. Its biggest risk is retrieval quality, so data chunking, cleanliness and indexing need to be prioritized.

● Fine-tuning enhances brand voice and policy adherence, but it bakes knowledge into model weights, which risks errors as that knowledge keeps evolving.

● Most enterprise-scale Gen AI products in 2026 are predicted to use hybrid stacks: RAG for dynamic knowledge and fine-tuning for predictable outcomes.

● Fine-tuning vs RAG vs hybrid stack: make your pick based on your architecture's cost, scale, compliance and domain-specific needs.

Operationalizing Gen AI for enterprises is largely about reliability. Are your models generating consistent and factually correct outputs? Do your own teams trust the AI outputs enough to streamline operations and act on them?

There's an inherent paradox in Gen AI models: no matter how brilliant a model is, it can generate outputs from outdated facts and fail to follow your core governance policies. Retrieval Augmented Generation vs fine-tuning is essentially an architectural decision, and a mission-critical one. It can make your generative AI models look good in demos but fail in production, or turn them into a highly scalable, compliant revenue growth engine.

Why Do Enterprises Struggle to Choose Between RAG and Fine-Tuning?

Tech is a rapidly changing industry where tooling evolves in days. However, the consequences of tooling choices (latency, TCO, hallucinations, governance, and risk) stay in the system much longer. Different teams in your organization also optimize for different variables. You aren't just balancing marketing's push for a quicker launch against tech architects' need for stability; each team is prioritizing its own goal:

- IT teams prioritize security

- Data teams want reproducibility

- Product teams chase time-to-value

The broader industry guidance is to use RAG for knowledge, fine-tuning for behaviour, and a hybrid approach for high-stakes environments. But what truly works for your business? We've put our enterprise lens on, and in this article we'll cut through the noise.

We won't just break down how fine-tuning and RAG differ, where hybrid architectures fit, and what each of them enables for real value in Gen AI development. We'll also help you review each of the following angles:

- Cost & TCO: Upfront vs ongoing cost, infrastructural patterns, people expenditures

- Risk: Hallucinations, compliance issues, auditability

- Scale: Latency/throughput, update cadence, portability, vendor lock‑in

A report by Grand View Research predicts that the global enterprise Gen AI market will grow from USD 2.94 billion in 2024 to USD 19.8 billion by 2030, at a CAGR of 38.4%. The need to decide on the right framework is pressing. By the end of this read, you should have a decision-ready framework that helps you cut through the hype and align your generative AI strategy with realistic business goals.

What Is RAG in GenAI?

Retrieval Augmented Generation, or RAG, is an architecture pattern that retrieves relevant enterprise information at query time and injects it into the model prompt without changing model weights, so that outputs are fresh, citable, and grounded.

How Does RAG Work in High-Level Workflows?

Most enterprise-scale businesses prefer RAG because it delivers data freshness without retraining, traceability and citations for transparent auditing, grounding to reduce hallucinations, and more stable governance, since private enterprise data stays out of model weights. At a high level, the architecture looks like this:

Retriever (semantic + hybrid search), vector store (for embeddings), LLM (generator), plus pipelines for ingestion, metadata, and security controls.

- Ingest and index data (chunking and embedding) into a vector database.

- At query time, the retriever finds the most relevant chunks of content, and an optional re-ranker refines their order.

- The LLM uses this retrieved context (with citations) to generate the answer to the query.
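The three steps above can be sketched in a few lines of dependency-free Python. This is a minimal illustration under stated assumptions, not a production design: `embed` is a toy bag-of-words stand-in for a real embedding model, a Python list stands in for the vector database, and every function name here is hypothetical rather than part of any specific framework. The LLM call itself is left out; the sketch stops at the grounded, citation-tagged prompt that would be sent to the generator.

```python
import math
import re
from collections import Counter

def chunk(text: str, size: int = 40) -> list[str]:
    """Ingestion: split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def build_index(docs: dict[str, str]) -> list[tuple[str, str, Counter]]:
    """Ingestion: store (source_id, chunk_text, vector) for every chunk of every document."""
    index = []
    for doc_id, text in docs.items():
        for n, piece in enumerate(chunk(text)):
            index.append((f"{doc_id}#{n}", piece, embed(piece)))
    return index

def retrieve(index: list[tuple[str, str, Counter]], query: str, k: int = 2) -> list[tuple[str, str]]:
    """Query time: rank chunks by cosine similarity to the query and keep the top k."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[2]), reverse=True)
    return [(source, text) for source, text, _ in ranked[:k]]

def build_prompt(query: str, hits: list[tuple[str, str]]) -> str:
    """Generation input: retrieved chunks tagged with source IDs so the LLM can cite them."""
    context = "\n".join(f"[{source}] {text}" for source, text in hits)
    return f"Answer using only the sources below and cite them by ID.\n{context}\n\nQuestion: {query}"
```

For example, given one short refund-policy document and one pricing document, asking "What is the refund window for unused licenses?" ranks the refund-policy chunk first because it shares more terms with the question. Swapping in a real embedding model and vector database changes the quality of the ranking, not the shape of the pipeline.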

To understand the true leverage of this architecture, it helps to look in detail at the advantages and disadvantages of RAG.

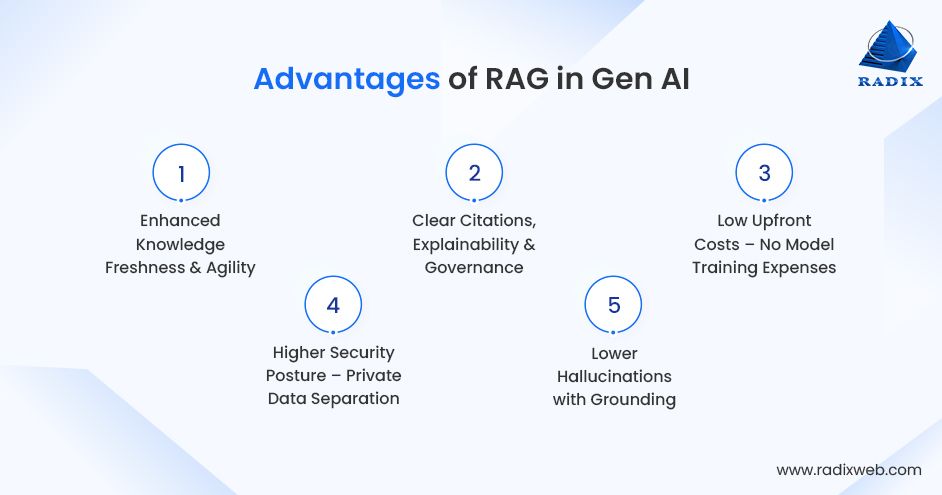

Benefits of RAG for Enterprise Generative AI

Businesses that have implemented RAG architectures for Gen AI models report fresher knowledge, clear citations, and fewer hallucinations. Let's take a quick look at the benefits of RAG:

Knowledge Freshness & Agility: Update or revoke knowledge simply by re-indexing documents: no MLOps cycles, no retraining, no downtime. It's best for dynamic environments where prices, product catalogs, legal documents, and compliance policies change often.
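To make "update or revoke by re-indexing" concrete, here is a minimal sketch built around a hypothetical in-memory `KnowledgeIndex` class. Real vector databases expose their own upsert and delete operations, which this does not reproduce, and the keyword `search` is a deliberately naive stand-in for vector retrieval. The point it illustrates is that changing what the assistant knows is a data operation, not a training job.

```python
class KnowledgeIndex:
    """Hypothetical in-memory index keyed by document ID (stand-in for a vector DB)."""

    def __init__(self) -> None:
        self._chunks: dict[str, list[str]] = {}

    def upsert(self, doc_id: str, text: str, size: int = 50) -> None:
        """Re-index a document: its old chunks are replaced wholesale; no retraining involved."""
        words = text.split()
        self._chunks[doc_id] = [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

    def revoke(self, doc_id: str) -> None:
        """Remove a document so it can no longer be retrieved."""
        self._chunks.pop(doc_id, None)

    def search(self, term: str) -> list[str]:
        """Naive keyword lookup: IDs of documents with at least one matching chunk."""
        term = term.lower()
        return [doc_id for doc_id, chunks in self._chunks.items()
                if any(term in c.lower() for c in chunks)]
```

After `upsert("price-list", ...)` is called a second time with a new price, only the new price is retrievable, and after `revoke("price-list")` nothing from that document is, which is the freshness property described above.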

Citations & Governance: Improve explainability, since every output comes with verifiable source snippets. For regulated industries like legal, fintech and healthcare, this makes it far easier to strengthen auditability, reduce compliance risk, and build user trust.

Lower Upfront Cost: Avoid expensive model training. Pipelines, embeddings and retrieval infrastructure make initial deployments cheap and fast. Because RAG avoids GPU-heavy fine-tuning, budget forecasting becomes simpler and more accurate.

Enhanced Security Posture: RAG enables tighter control over highly sensitive content by keeping enterprise data out of model weights. It also lets you enforce strict access control, row-level permissions, redaction, and data residency rules directly in the retrieval layer, without modifying the model itself.
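Permission checks in the retrieval layer can be as simple as filtering candidate chunks against the caller's entitlements before anything is added to the prompt. The sketch below assumes each chunk carries hypothetical `allowed_groups` and `region` metadata attached at ingestion time; in production the same filter would usually be pushed down into the vector store query rather than applied afterwards in Python.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Chunk:
    source: str
    text: str
    allowed_groups: frozenset = field(default_factory=frozenset)  # groups permitted to read this chunk
    region: str = "eu"  # hypothetical data-residency tag

def authorized(chunks: list[Chunk], user_groups: set[str], user_region: str) -> list[Chunk]:
    """Drop chunks the user may not see *before* they can reach the LLM prompt."""
    return [
        c for c in chunks
        if bool(c.allowed_groups & user_groups)  # at least one shared group
        and c.region == user_region              # residency rule
    ]
```

Because the model never sees a filtered-out chunk, it cannot leak it, and revoking a user's access takes effect on the next query without touching the model.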

Reduced Hallucinations: RAG helps control hallucinations in Gen AI models by grounding responses in internal documents, which reduces factual drift. Verified enterprise sources make high-stakes decision-making safer.

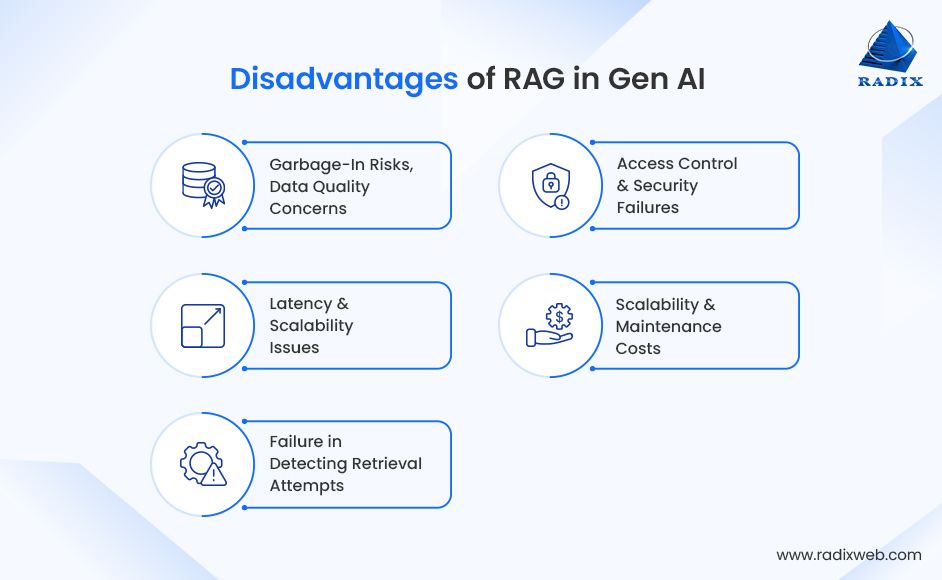

Challenges of RAG in Real‑World Generative AI Systems

While RAG architectures bring in several business benefits for Gen AI models, businesses also face considerable roadblocks in implementing them:

Data Quality & "Garbage-in" Risks: Indexing is the hero task for RAG. Retrieval precision often suffers from flawed chunking, outdated files, noisy documents, and conflicting metadata. This is why semantic chunking, ingestion governance, and data cleaning become a necessity for RAG systems.
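One inexpensive improvement over fixed-size splitting is to chunk on sentence boundaries with a small overlap, so that no chunk starts or ends mid-sentence and context carries across chunk boundaries. To be clear, this is a simpler heuristic than full semantic chunking (which typically splits where the embedding similarity between adjacent sentences drops); the regex sentence splitter and the default sizes below are assumptions for illustration.

```python
import re

def sentence_chunks(text: str, max_words: int = 60, overlap: int = 1) -> list[str]:
    """Group whole sentences into chunks of roughly `max_words` words, repeating the
    last `overlap` sentences at the start of the next chunk for continuity."""
    # Naive splitter: break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    chunks: list[str] = []
    current: list[str] = []
    for sentence in sentences:
        words_so_far = sum(len(s.split()) for s in current)
        if current and words_so_far + len(sentence.split()) > max_words:
            chunks.append(" ".join(current))
            # Carry the tail of the finished chunk into the next one.
            current = current[-overlap:] if overlap else []
        current.append(sentence)
    if current:
        chunks.append(" ".join(current))
    return chunks
```

With `max_words=6` and `overlap=1`, the text "One two three. Four five six. Seven eight nine." becomes two chunks that share the middle sentence. A single sentence longer than `max_words` is kept whole rather than cut, which trades strict size limits for readable chunks.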

Latency & Scalability Challenges: Retrieval pipelines add latency overhead through vector searches, re-ranking, and multi-hop querying. To meet enterprise SLAs, teams have to optimize caching layers, async retrieval, hybrid search, and index architectures.
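Caching is often the cheapest of these optimizations: repeated or near-identical questions can skip the vector search entirely. The sketch below is a hypothetical `RetrievalCache` wrapper, a small least-recently-used (LRU) cache built on `collections.OrderedDict` that sits in front of whatever retrieval function you pass in; the case and whitespace normalization of the query key is an assumption you may want to tune.

```python
from collections import OrderedDict
from typing import Callable

class RetrievalCache:
    """Hypothetical LRU cache in front of an expensive retrieval call."""

    def __init__(self, retrieve: Callable[[str], list[str]], capacity: int = 1024) -> None:
        self._retrieve = retrieve
        self._capacity = capacity
        self._store: "OrderedDict[str, list[str]]" = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, query: str) -> list[str]:
        key = " ".join(query.lower().split())  # normalise case and whitespace
        if key in self._store:
            self._store.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._retrieve(key)
        self._store[key] = result
        if len(self._store) > self._capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return result

    def clear(self) -> None:
        """Call after re-indexing so cached results never outlive the data they came from."""
        self._store.clear()
```

The `clear` hook matters because a cache trades freshness for speed. Invalidating it on every re-index keeps the freshness advantage that motivates RAG in the first place.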

Retrieval Failures: Common retrieval failures in RAG systems include semantic near-misses, low recall, and terminology mismatches. Enterprises can bridge these gaps by monitoring retrieval hit rates, using re-rankers, and adding hybrid lexical + vector search.
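Reciprocal rank fusion (RRF) is one common, training-free way to merge lexical (keyword) and vector results. It is shown here as an example technique, not something this article prescribes. Each document scores the sum of 1 / (k + rank) across the lists it appears in, and k = 60 is the constant conventionally used. The companion `hit_rate_at_k` function is a simple way to monitor retrieval quality, assuming you maintain a hypothetical labelled set of queries, each paired with one known-relevant document ID.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs (e.g. BM25 and vector search)."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first.
    return sorted(scores, key=lambda d: scores[d], reverse=True)

def hit_rate_at_k(results: list[list[str]], relevant: list[str], k: int = 5) -> float:
    """Share of queries whose known-relevant document appears in the top k results."""
    if not relevant:
        return 0.0
    hits = sum(1 for ranked, gold in zip(results, relevant) if gold in ranked[:k])
    return hits / len(relevant)
```

A document ranked second by both retrievers can outrank one that is first in one list and third in the other. This is why fusion helps with terminology mismatches: a keyword hit and a semantic hit reinforce each other.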

Security & Access Control: A RAG system directly inherits your business's security posture. Document-level ACLs, PII rules, and sensitivity labels must be embedded into every layer of indexing and retrieval.

Complex Maintenance Cost: To maintain accuracy and retrieval consistency, RAG systems need embedding refreshes, content archival, indexing optimizations, and metadata enrichment. These add to the long-term lifecycle management costs.

What Is Fine‑Tuning in Gen AI?

Fine-tuning further trains a pre-trained model's weights on curated examples, aligning the tone, behaviour and task-specific reasoning of a generative AI model with your domain.

It uses supervised fine-tuning (SFT) on input-output pairs, or preference signals via DPO or RLHF, to train new checkpoints that reflect your policies, style, formats, and reasoning patterns.
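For the preference-based route, the core of DPO (Direct Preference Optimization) fits in a few lines. This sketch computes the DPO objective for a single preference pair from precomputed sequence log-probabilities. It deliberately omits batching, tokenization, and the model forward passes that would produce those numbers, and `beta = 0.1` is only an illustrative default.

```python
import math

def softplus(z: float) -> float:
    """log(1 + exp(z)), computed without overflow for large |z|."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for one preference pair.

    Inputs are summed log-probabilities of the chosen (preferred) and rejected
    responses under the model being trained (policy) and a frozen reference model.
    """
    # How much more the policy favours the chosen answer than the reference does,
    # minus the same quantity for the rejected answer.
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # -log(sigmoid(beta * margin)) is identical to softplus(-beta * margin).
    return softplus(-beta * margin)
```

When the policy still matches the reference, the margin is zero and the loss is log 2. Training lowers it by shifting probability toward the preferred responses relative to the reference, without the separate reward model that classic RLHF trains.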

Gen AI generally sees two kinds of fine-tuning:

Full (End-to-End) Fine-Tuning: It updates all of the model's weights and is best suited to deep domain shifts. However, full fine-tuning has high upfront costs and longer implementation timelines.

Parameter-Efficient Fine-Tuning (PEFT): Methods such as LoRA and adapters update only a small subset of parameters, which makes PEFT cheaper, faster, and easier to version than full fine-tuning.
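The size of the PEFT saving is easy to compute for LoRA. LoRA freezes a weight matrix W and trains two small matrices, B (d_out × r) and A (r × d_in), so the layer computes h = W x + (alpha / r) · B (A x). The pure-Python sketch below shows both the parameter arithmetic and that forward pass. The helper names are hypothetical, and real implementations such as the Hugging Face PEFT library operate on framework tensors rather than lists.

```python
def lora_param_counts(d_out: int, d_in: int, rank: int) -> tuple[int, int]:
    """Trainable parameters for one d_out x d_in weight matrix:
    (full fine-tuning, LoRA with the given rank)."""
    return d_out * d_in, rank * (d_out + d_in)

def matvec(m: list[list[float]], v: list[float]) -> list[float]:
    """Matrix-vector product on nested lists."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def lora_forward(W: list[list[float]], A: list[list[float]], B: list[list[float]],
                 x: list[float], alpha: float, rank: int) -> list[float]:
    """h = W x + (alpha / rank) * B (A x); W stays frozen, only A and B are trained."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * d for b, d in zip(base, delta)]
```

For a 4096 × 4096 projection at rank 8, `lora_param_counts(4096, 4096, 8)` gives 16,777,216 trainable parameters for full fine-tuning versus 65,536 for LoRA, roughly 0.4%. Because B is conventionally initialised to zeros, the adapted model starts out behaving exactly like the base model. A small adapter file per team or task is also what makes PEFT easy to version.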

Where is Fine-Tuning Typically Used?

Now that you know what fine-tuning in Gen AI is, let's look at the use cases where it has delivered substantial business value to adopters:

- Consistent Brand Voice & Format: Fine-tuning helps maintain brand coherence and consistently structured collateral, from reports to emails. It hard-codes organizational tone, terminology, and formatting needs into the model weights.

- Policy Enforcement & Task Execution: At enterprise scale, businesses also use fine-tuning to apply internal rules, compliance logic and workflows reliably, without depending entirely on prompts. It works especially well for deterministic, repeatable tasks where accuracy is crucial.

- Latency-Sensitive Tasks: Fine-tuned models can deliver sub-second response times, making them well suited to high-volume classification, routing engines, embedded systems, and edge deployments. They operate without retrieval calls, which matters where milliseconds count.

- Narrow Domain Expertise with Low Content Changes: Fine-tuning works best when the underlying logic (legal and medical terminology, compliance requirements, and engineering workflows) remains relatively static, because stable knowledge means fewer costly retraining cycles.

Benefits of Fine‑Tuning for Gen AI

I'd say fine-tuning for Gen AI brings solid stability to high-stakes environments. Adopters generally see these advantages:

Optimizes Model Behavior: Fine-tuning reshapes how the model responds, building brand-aligned tone, step-by-step reasoning and formatting patterns into the model weights. It gives AI models a level of behavioural precision that RAG architectures alone cannot consistently ensure.

Lower Runtime Latency: Because fine-tuned models skip the retrieval step, they can offer fast inference, high throughput and cost-effective scaling. This makes them well suited to batch processing, agentic automation and edge LLM experiences.

Task Specialization: Fine-tuning works best for specialized tasks like code generation, medical coding, multi-step reasoning, legal clause extraction, etc., where the model is trained to follow task-definite logic with accuracy.

Challenges of Fine‑Tuning in GenAI

Despite its advantages, fine-tuning also introduces the following limitations:

High Cost of Training & Retraining: Fine-tuning needs deep data preparation, GPU-intensive training cycles, evaluations, versioning, and safety protocols. Such upfront and ongoing costs make fine-tuning costlier than RAG.

Limited Support for Frequently Changing Data: Fine-tuned models typically internalize their knowledge. This is why they become outdated for quickly evolving domains. For environments where rules, facts, and documents change dynamically, RAG works better than fine-tuning.

Risk of Overfitting to Narrow Datasets: When training datasets are small, biased, or inconsistent, fine-tuned models often "lock in" those issues. The result can range from hallucinations to brittle behaviour and incorrect generalization: outputs that sound confident but are wrong.

Data Preparation & Quality Complexity: Fine-tuning needs well-structured datasets, style guides, consistent labels, and clearly defined, high-impact use cases. Any ambiguity in the training data gets baked into the model weights and becomes difficult to debug later.
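Many of these data problems can be caught mechanically before any GPU time is spent. The sketch below validates an SFT dataset stored as JSON Lines. It assumes a hypothetical schema with `prompt` and `completion` string fields; providers use different formats, such as chat-style message lists, so adapt the field check accordingly. It flags malformed lines, missing or empty fields, and the same prompt appearing with conflicting answers, which is exactly the kind of ambiguity that gets baked into weights.

```python
import json

REQUIRED_FIELDS = ("prompt", "completion")  # assumed schema; adapt to your provider's format

def _has_text(record: dict, field: str) -> bool:
    """True if the field exists, is a string, and is not just whitespace."""
    value = record.get(field)
    return isinstance(value, str) and bool(value.strip())

def validate_sft_jsonl(lines: list[str]) -> dict[str, list[int]]:
    """Return 1-based line numbers of records with common training-data problems."""
    issues: dict[str, list[int]] = {"invalid_json": [], "missing_or_empty": [], "conflicting_duplicate": []}
    first_completion: dict[str, str] = {}  # normalised prompt -> first completion seen
    for lineno, line in enumerate(lines, start=1):
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            issues["invalid_json"].append(lineno)
            continue
        if not isinstance(record, dict) or not all(_has_text(record, f) for f in REQUIRED_FIELDS):
            issues["missing_or_empty"].append(lineno)
            continue
        # Same prompt (ignoring case and whitespace) with a different answer = ambiguous label.
        key = " ".join(record["prompt"].lower().split())
        if key in first_completion and first_completion[key] != record["completion"]:
            issues["conflicting_duplicate"].append(lineno)
        first_completion.setdefault(key, record["completion"])
    return issues
```

Running a check like this in CI before every training run keeps the dataset from silently degrading as more teams contribute examples.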

Scalability & Maintenance Challenges: Enterprise generative AI programs often require multiple fine-tuned models serving diverse teams, tasks and workflows. Each needs its own governance protocols, testing requirements, and model lifecycle management, which leads to higher maintenance costs and infrastructure overheads.

Limited Transparency & Debugging: Model behaviour is encoded inside model weights, which makes it difficult to assess why a model behaves in a certain way. Fine-tuning doesn’t involve any native citations, which makes risk management and compliance audits difficult.

Difficulty in Controlling Hallucinations: Fine-tuning corrects formatting, tone, and reasoning but does little to guarantee factual accuracy. Without the grounding that RAG provides, fine-tuned models can keep hallucinating facts, and do so consistently.

RAG vs Fine-Tuning: What Core Differences Must Enterprises Understand?

Our AI stats report points out that businesses leveraging Gen AI see up to a 3.7x increase in ROI. This is where the architectural decision becomes crucial. We want you to make informed choices, so here's a quick-view table of the crucial differences:

| Dimension | RAG | Fine-Tuning |
|---|---|---|
| Knowledge Access vs Model Behaviour | Adds external knowledge at query time; knowledge remains outside the model | Changes model behaviour and internalizes patterns; knowledge stays frozen until retrained |
| Data Freshness | Near-real-time via re-indexing | Static between retrains |
| Cost Model | Low upfront (no training); ongoing retrieval + storage | Higher upfront (training + data prep); lower per-token inference later |
| Latency & Throughput | Retrieval overhead (1–3s typical; can be optimized) | Sub-second with optimized inference; no retrieval |
| Accuracy & Hallucinations | Better factual grounding with citations; still needs evaluation | Better format/style adherence; factual hallucinations persist |
| Portability & Vendor Lock-in | Portable across LLMs; swap generators while keeping the data layer stable | Model weights tied to vendor/tooling; migrations require re-tuning |
| Governance, Auditability, Compliance | Stronger provenance; data stays external; easier audits | Harder to audit: knowledge sits in weights; fewer native citations |
| Enterprise Use-Case Alignment | Knowledge assistants, compliance Q&A, enterprise search, support | Structured drafting, routing/classification, policy-bound outputs |
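The "Cost Model" row implies a break-even point. Fine-tuning's extra upfront spend is repaid once query volume is large enough that its lower per-query cost makes up the difference, so the break-even volume is (FT upfront − RAG upfront) / (RAG per-query − FT per-query). The sketch below encodes that formula. The function name and every number in it are hypothetical placeholders, not benchmarks, and the model deliberately ignores recurring costs such as retraining cycles and index maintenance, which you would add for a real TCO comparison.

```python
from typing import Optional

def break_even_queries(rag_upfront: float, rag_per_query: float,
                       ft_upfront: float, ft_per_query: float) -> Optional[float]:
    """Query volume at which fine-tuning's extra upfront cost is repaid by its lower
    per-query cost. Returns None if fine-tuning is never cheaper per query."""
    saving_per_query = rag_per_query - ft_per_query
    if saving_per_query <= 0:
        return None
    extra_upfront = ft_upfront - rag_upfront
    return max(0.0, extra_upfront / saving_per_query)

# Hypothetical inputs for illustration only, not real prices.
volume = break_even_queries(rag_upfront=20_000, rag_per_query=0.004,
                            ft_upfront=60_000, ft_per_query=0.002)
```

With these placeholder figures the break-even lands at roughly 20 million queries. Below that volume RAG is cheaper overall, and above it fine-tuning pays off, assuming the knowledge stays stable enough to avoid frequent retraining.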

Now that we’ve discussed the differences between RAG and fine-tuning, let’s discuss the use cases where they work best.

Make the Right Enterprise Choice - When to Use RAG, Fine‑Tuning, or Both Together

When Does RAG Work Best?

RAG can be used when your knowledge base is large, changes frequently and needs to show provenance (citations).

- Your Knowledge Base Is Massive and Dynamic

RAG is ideal for business environments where documents, policies, pricing, product specifications, or compliance rules change frequently and need to be reflected instantly in Gen AI outputs.

- Accuracy, Freshness, and Auditability Are Non-Negotiable

RAG pulls the latest information at every query, so outputs reflect current truth, not outdated model weights.

- Your Processes Need Transparent Citations

RAG is best for regulated industries where every generative AI response must show where its information came from and support compliance reviews.

- You Have to Minimize Hallucinations with Factual Grounding

RAG retrieves authoritative internal documents that reduce a model's dependence on probabilistic guesswork.

- You Want Faster Time-to-Production and Lower Upfront Cost

RAG involves no training or retraining cycles; it relies on content ingestion and vector indexing, which leads to lower TCO and faster time-to-market.

- You Want Stricter Governance

RAG architectures keep private or sensitive data outside the model weights, enabling transparent access controls, redaction logic, and policy guardrails at the retrieval layer.
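Redaction logic of this kind can run at ingestion, before text is indexed, or just before retrieved chunks are placed in the prompt. The sketch below uses two illustrative regular expressions and a hypothetical `redact` helper. The patterns are intentionally simple and will both miss some PII and over-match some numbers, such as long invoice IDs, so real deployments usually rely on a dedicated PII detection service.

```python
import re

# Illustrative patterns only; not a complete or precise PII detector.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}

def redact(text: str) -> str:
    """Replace each pattern match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```

Typed placeholders like `[EMAIL]` keep the sentence readable for the LLM while ensuring the raw value never enters the index or the prompt.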

This is what it can look like in real life:

Our Australia-based legal services provider client reviews complex contracts, compliance documents, policies, and clauses daily as part of the job. However, their business processes slowed down because of the constant manual effort that went into retrieving, analyzing and retaining information.

That's when the client opted to build a RAG-based Q&A system hosted on Azure for legal search and document analysis. With this system, the client achieved 97% query interpretation accuracy and 40% lower infrastructure costs, and handled over 500 concurrent queries in the testing phase.

When Does Fine-Tuning Deliver Results?

Fine‑Tuning is your best bet when your business process needs steady policy enforcement, specialized behaviour, or has low latency needs.

- You Need Behavioral Consistency

Fine-tuning hard-codes policies, tone, structure, and reasoning patterns right into model weights for predictable, reliable, and repeatable outputs.

- You Have Stable, Slow-Changing Domain Knowledge

Fine-tuning delivers the most value when the core content (medical, legal, financial, internal processes, etc.) doesn't require frequent updates.

- You Need Low-Latency Inference with No Retrieval Overhead

Fine-tuning works best for high-volume routing, classification, scoring, and other latency-critical workloads.

- Your Operational Tasks Require Strict Formatting or Rule-Based Execution

Fine-tuned models are known for delivering structured outputs, standardized templates, and high-precision task workflows.

- You Need Alignment with Brand Voice or Expert Domain Style

Fine-tuning helps ensure every output from your Gen AI model aligns with the brand's personality, technical depth, and compliance-driven communication style.

- You Have Access to High-Quality Labelled Data

Fine-tuning delivers the best value when training data is clean, consistent, and representative of the intended behaviour.

When to Use the RAG + Fine-Tuning (Hybrid) Approach?

Leverage RAG+Fine-Tuning (Hybrid) when your business process needs consistent model behaviour + fresh, verified data.

- You Want the Best of Both: Behaviour + Knowledge

Use fine-tuning for reasoning, style, and policy; use RAG for live, source-grounded facts. In 2026 and beyond, this is expected to become the dominant enterprise model.

- Your Model Mixes Stable Reasoning and Dynamic Knowledge

Say you are building a financial advisory bot. Fine-tuning would lock in its advisory logic, while RAG would supply the latest market insights.

- You Need Production-Grade Architecture

RAG manages data freshness and compliance, while fine-tuning handles task execution and formatting consistency.

- You Want a Robust Architecture Pattern:

- RAG → Knowledge Plane (retrieval, citations, compliance)

- Fine-Tuning (PEFT) → Behavioural Engine (logic, tone, structured output)

RAG's grounding reduces model hallucinations, while fine-tuned behaviour reduces model drift. Combined, they deliver stable, accurate, and usable enterprise outputs.

- You Support Multi-Step Agents or Workflow Automation

AI agents need consistent reasoning (fine-tuning) and real-time factual insights (RAG) to make accurate decisions and execute actions, and that's what a hybrid approach delivers.
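The knowledge-plane / behavioural-engine split can be expressed as a thin orchestration function. In this sketch both planes are injected as plain callables: `retrieve` and `fine_tuned_generate` are hypothetical placeholders for your vector search and your fine-tuned (for example, PEFT-adapted) model endpoint. The refusal when nothing is retrieved is one possible design choice, not a requirement of the pattern.

```python
from typing import Callable

Retriever = Callable[[str], list[tuple[str, str]]]  # query -> [(source_id, text)]
Generator = Callable[[str], str]                     # prompt -> model output

def hybrid_answer(query: str, retrieve: Retriever, fine_tuned_generate: Generator,
                  k: int = 3) -> dict:
    """Knowledge plane (RAG) supplies grounded facts; the behavioural engine
    (a fine-tuned model) turns them into policy-compliant, structured output."""
    hits = retrieve(query)[:k]
    if not hits:
        # Refuse rather than let the model answer from its weights alone.
        return {"answer": "No supporting source found.", "sources": []}
    context = "\n".join(f"[{source}] {text}" for source, text in hits)
    prompt = f"Sources:\n{context}\n\nQuestion: {query}"
    return {"answer": fine_tuned_generate(prompt),
            "sources": [source for source, _ in hits]}
```

In the financial advisory example, `retrieve` would return current market notes and the fine-tuned model would apply the firm's advisory logic and output format. Because the two planes are separate, either one can be swapped (new documents, a re-tuned adapter, a different base model) without rebuilding the other.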

What’s Your Pick for 2026 and Beyond?

Look closely, and RAG vs fine-tuning isn't just an architectural decision. It's largely about how generative AI is operationalized beyond the prototype stage and into enterprise-scale use cases. RAG grounds your Gen AI outputs in fresh, citable insights for compliance and trust, while fine-tuning locks in and amplifies compliance-aligned behaviour, style, and speed.

However, the path that delivers the most value for modern enterprises is hybrid: RAG acts as the knowledge plane, and fine-tuning as the behaviour engine. Our experts have combined the best of RAG and fine-tuning to reduce hallucinations, shorten time-to-value and future-proof evolving tech stacks against data, model and vendor changes.

If you're ready to move your concepts from pilot to production with RAG, fine-tuning or a hybrid approach, partner with Radixweb to integrate robust pipelines and operationalize measurable ROI across your generative AI landscape, with speed, security and reliability.

Frequently Asked Questions

When should businesses use RAG instead of fine‑tuning?

Is RAG better than fine‑tuning for generative AI applications?

Which approach is more cost‑effective: RAG or fine‑tuning?

Can RAG and fine‑tuning be used together in one AI system?

What is the biggest mistake enterprises make when choosing between RAG and fine‑tuning?

What is the difference between RAG and fine‑tuning in LLMs?
