
What’s At Stake: Machine-learning models now shape pricing, approvals, forecasts, and risk decisions at scale, often faster than organizations can govern them. Below I walk you through how enterprises quietly lose control of decision-making through the very models that succeed, and why ML governance and model risk management are essential to preserving accountability, intent, and long-term control.

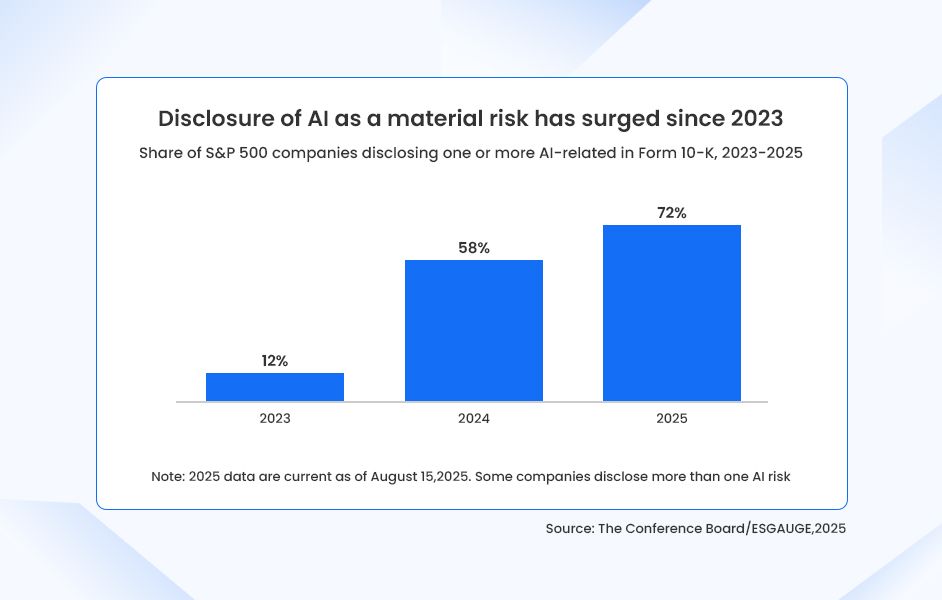

In 2025, almost 3 out of 4 S&P 500 companies (72%) disclosed AI as a material risk in their annual filings. That’s up from just 12% in 2023.

This six-fold surge is not about hype. It reflects a growing recognition that AI and ML systems are now strategic operational risks, capable of affecting earnings, regulatory posture, and corporate reputation.

Why does this shift matter? Because public disclosures are conservative by design. Companies do not add new risk language lightly, and they rarely do it merely because something might go wrong. They do it when something already has, or when leadership can clearly see that it will.

That observation is not speculative. A recent industry study found that 95% of enterprises have experienced at least one AI-related mishap. More concerning, however, is that only about 2% of enterprises meet even basic standards for responsible governance and risk control.

This gap, between lived experience and organizational readiness, is where AI stops being a technical challenge and becomes an enterprise risk.

While AI governance is often discussed as a broad enterprise concern, this discussion focuses specifically on ML governance, which is the discipline responsible for governing decision-making models that directly influence business outcomes.

The Risks Enterprises Already Recognize

AI investments are expected to increase by over 300% in the coming years. Most enterprises have also reached enough technical maturity to agree on the surface-level risks of AI and machine learning. These include:

- Financial exposure is usually the first to surface

When ML models drive pricing, credit decisions, fraud detection, or demand forecasting, small errors scale quickly. Unlike traditional systems, these failures do not always announce themselves. They compound quietly, quarter after quarter, until someone notices the impact on margins or revenue performance.

- Regulatory and legal risk follows closely behind

This is one of the most discussed risks in our MLOps consulting sessions. Regulators are no longer satisfied with after-the-fact explanations or vague assurances about controls. They want to know why a model made a decision, how it was validated, who approved it, and whether it behaves consistently across different populations and conditions. Without model risk management, organizations struggle to answer these questions in a defensible way.

- Customer trust is the third pillar of concern

ML-driven decisions feel final to customers in a way human decisions never did. When outcomes are inconsistent, biased, or impossible to explain, trust erodes quickly. And once trust is gone, no amount of technical explanation brings it back.

These risks alone justify serious investment in ML governance. And they are now widely understood.

The Risk That Rarely Gets Disclosed

And yet, none of these is the most dangerous risk enterprises face.

The more consequential threat is the gradual loss of institutional control over decision-making itself.

Every ML model encodes judgment. It reflects assumptions about risk tolerance, growth priorities, acceptable trade-offs, and business intent. Traditionally, these judgments lived in policies, leadership decisions, and experienced teams. With machine learning development, they increasingly live inside models that are retrained, reused, fine-tuned, and redeployed faster than organizations can realistically track.

This is how enterprises slowly lose control of their own intelligence.

It rarely happens through negligence. More often, it happens through success. A model performs well, so it gets reused. Another team adapts it for a new use case. Features are added to improve accuracy. Thresholds shift as data changes. Over time, the original intent behind the model fades, even as dependence on its outputs grows.

Eventually, leaders begin reacting to model outcomes without fully understanding the reasoning behind them. Strategy starts flowing from the system rather than into it. At that point, the organization is no longer using AI/ML to execute its decisions; it is allowing AI/ML to define them.

This is not a technical failure. It is a governance failure.

ML Governance as a Mechanism for Preserving Intent

ML governance is often misunderstood as bureaucracy or process overhead. In practice, its primary purpose is to preserve intent at scale.

Good governance forces clarity. It requires organizations to explicitly define what decisions a model is allowed to influence, under what conditions it should be trusted, and when human judgment must intervene. It establishes ownership so that models do not exist in a vacuum, disconnected from accountability.
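The requirements in the previous paragraph can be captured as data rather than left implicit. The following is a minimal sketch, assuming a hypothetical `ModelCharter` record and `route` function (illustrative names, not part of any particular governance product): each model names an accountable owner, the decision types it may influence, and a confidence floor below which human judgment must take over.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelCharter:
    """Hypothetical record of what a model may decide and who answers for it."""
    model_id: str
    owner: str                          # accountable person or team
    allowed_decisions: frozenset[str]   # decision types the model may influence
    min_confidence: float               # below this, a human must decide


def route(charter: ModelCharter, decision_type: str, confidence: float) -> str:
    """Decide whether a model output may be acted on automatically."""
    if decision_type not in charter.allowed_decisions:
        # The model is not authorized to influence this kind of decision at all.
        return "reject: outside charter"
    if confidence < charter.min_confidence:
        # Authorized, but not trusted at this confidence: hand off to the owner.
        return f"escalate to {charter.owner}"
    return "automate"


charter = ModelCharter(
    model_id="credit-limit-v3",         # hypothetical model
    owner="credit-risk-team",           # hypothetical owning team
    allowed_decisions=frozenset({"credit_limit"}),
    min_confidence=0.8,
)
print(route(charter, "credit_limit", 0.95))
print(route(charter, "pricing", 0.99))
```

The point of the design is that reuse by another team (say, for pricing) fails loudly against the charter instead of silently inheriting the original model's authority.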

Model risk management complements this by ensuring that models are not just accurate, but appropriate. Accuracy alone does not guarantee alignment with business goals, risk appetite, or ethical standards. Model risk practices introduce validation, monitoring, and challenge mechanisms that keep models grounded in real-world behavior rather than theoretical performance.

Together, ML governance and model risk management ensure that intelligence remains institutional rather than accidental. They make sure that as AI systems evolve, organizations retain the ability to explain, defend, and ultimately own the decisions being made.

What This Looks Like Inside Enterprises

This loss of control is not theoretical. We see it consistently in organizations that have reached a certain level of AI maturity.

At Radixweb, we often see enterprises reach a clear inflection point. They have invested heavily in data, AI, and machine learning capabilities, deployed multiple models across business functions, and achieved measurable gains. But when leaders start asking deeper questions about consistency, accountability, or long-term control, the answers are often unclear.

That is usually when governance becomes a strategic priority. Not because something has already failed catastrophically, but because organizations recognize that scaling intelligence without structure eventually leads to fragility.

That’s why we make it a priority to help the organizations we work with embed ML governance and model risk management directly into the lifecycle: defining ownership upfront, establishing approval and validation workflows, monitoring for drift and unintended behavior, and creating clear escalation paths when models operate outside defined intent.
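The drift-monitoring step can be illustrated with the Population Stability Index (PSI), one common way to compare a model's live input or score distribution against its validation baseline. This is a minimal sketch, not a description of any specific tooling: the equal-width binning, the `psi` and `drift_status` names, and the 0.1 / 0.25 thresholds (a widely used rule of thumb, not a regulatory standard) are all assumptions.

```python
import math
from typing import Sequence


def psi(baseline: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index: sum of (q - p) * ln(q / p) over bins."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    # Equal-width bins over the baseline range; fall back to width 1 if constant.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    p = proportions(baseline)
    q = proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def drift_status(score: float, warn_at: float = 0.1,
                 escalate_at: float = 0.25) -> str:
    """Map a PSI score to an action under assumed rule-of-thumb thresholds."""
    if score >= escalate_at:
        return "escalate"
    if score >= warn_at:
        return "warn"
    return "ok"


baseline_scores = [i / 100 for i in range(100)]          # scores at validation time
live_scores = [0.9 + i / 1000 for i in range(100)]       # scores piled into the top bin
print(drift_status(psi(baseline_scores, live_scores)))
```

In a governed lifecycle, an "escalate" result would route the model to its named owner for review rather than triggering an automatic retrain, which is exactly the kind of silent threshold shift that erodes original intent.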

The objective is not to slow innovation. It is to make innovation sustainable.

The Enduring Advantage of Governed Intelligence

Over the next decade, the enterprises that succeed will not be the ones with the most sophisticated models or the highest accuracy scores. They will be the ones that retain clarity over why their systems behave the way they do.

ML governance is not about fear or compliance theater. It is about leadership. It is about ensuring that as machines become more capable, organizations do not abdicate responsibility for the decisions that define them.

The rise in AI risk disclosures tells us one thing clearly: enterprises are no longer asking whether AI can cause harm. They are asking whether they are prepared to manage it.

ML governance and model risk management are how that preparedness becomes real, not just on paper, but in practice.
