
Where QA Is Headed in 2026: Quality assurance and software testing are being rewritten in real time. This blog unpacks the latest trends in software quality assurance that are redefining how teams test, validate, and protect modern software. You’ll learn what’s changing, why traditional approaches are falling behind, and how today’s QA practices help teams ship faster, safer, and more reliable software in an increasingly complex development landscape.

The global software quality assurance market is valued at $47.3 billion in 2026 and is projected to exceed $73 billion by 2035. Other industry reports on QA and testing suggest that quality assurance now accounts for about 40% of the total development budget.

Yet, 95% of organizations are still shipping software with bugs.

Today, quality assurance is not failing because teams lack tools or talent. It’s failing because software itself has outgrown the old QA model. Release cycles have shrunk from months to days. Applications now depend on dozens of APIs. A single UI change can quietly break critical user flows.

Traditional QA, which treats testing as a late-stage activity, doesn’t work anymore.

What’s emerging instead is a shift from testing software to engineering confidence. Modern QA is becoming continuous, automated, and deeply embedded across the lifecycle. AI-driven test creation, self-healing automation, production monitoring, and cloud-scale test environments are not isolated quality assurance trends.

They are responses to a single problem: how to move fast without losing trust in every release.

This blog explores the latest trends in software testing and QA that are shaping that shift. We also explain why these software quality assurance trends matter and how forward-looking teams are redefining quality to keep pace with them.

Why These Quality Assurance Trends Matter Now

The software quality assurance market is being reshaped by forces that go far beyond testing tools or methodologies.

Enterprises today are operating in an environment defined by accelerated release cycles, cloud-native architectures, AI-driven functionality, heightened security threats, and stricter regulatory oversight. At the same time, user expectations for reliability, performance, and experience have never been higher.

These shifts are converging to expose the limits of traditional testing models. Manual-heavy, late-stage, and siloed approaches simply cannot keep pace.


What we are seeing globally is not a gradual evolution. It is a structural change in how quality is engineered, measured, and sustained. Testing is moving closer to development, deeper into production, and increasingly supported by intelligent automation solutions for business workflows and real-time feedback. The recent trends in quality assurance shaping 2026 are direct responses to this reality.

Several macro forces are driving this inflection point:

- Release velocity is accelerating: Agile, DevOps, and CI/CD pipelines demand faster validation without compromising quality.

- Architectural complexity is increasing: Microservices, APIs, and distributed systems require continuous and contract-based testing.

- AI adoption is rising: Intelligent systems need intelligent testing to validate behavior, data integrity, and outcomes.

- Security and compliance pressure is intensifying: Breaches and regulatory violations now carry significant financial and reputational risk.

- User experience is a differentiator: Visual, performance, and reliability issues directly impact conversion and brand trust.

Ignoring these forces widens the gap between delivery speed and software quality. The latest technology trends in quality assurance discussed in this blog are not speculative ideas or experimental practices. They represent proven responses that leading organizations are already adopting to remain competitive.

For enterprises planning beyond 2026, understanding and acting on these software quality assurance market trends is no longer optional. It is foundational to building resilient, scalable, and future-ready digital products.

10 Latest Technology Trends in Quality Assurance and Software Testing

The following latest technology trends in quality assurance reflect how QA is evolving in response to real-world engineering, compliance, and user experience demands. These are not experimental ideas, but tangible shifts shaping how leading organizations are building, testing, and scaling software.

1. AI-Generated Test Scripts

Automation Without Manual Scripting Overhead

AI-generated test scripts are rapidly moving from experimental to mainstream in global QA practices. In fact, 40% of QA teams have already integrated AI tools into their testing processes.

Rather than manually authoring and maintaining every script, GenAI models analyze requirements, application flows, and historical data to produce automation that evolves as the code does. This shift matters because it directly addresses the two biggest pain points in traditional automation:

- Maintenance cost

- Slow ramp-up times

As agile delivery and continuous integration become ubiquitous, leveraging AI for script creation isn’t just a nice-to-have. It is one of the upcoming trends in software testing that enables teams to keep pace with release velocity while broadening coverage with fewer resources.

2. Test Automation in Production

Testing Where Real Failures Happen

As software systems grow more distributed and release cycles shorten, the industry is accepting a hard truth:

“Pre-production testing alone cannot guarantee reliability”

Automated checks running directly in production (covering all key software quality metrics and KPIs, user journeys, availability, latency, and data integrity) are becoming standard practice in mature digital organizations. But this latest trend in QA testing is not about reckless testing in live systems. It is about controlled, read-only validations and synthetic transactions that continuously confirm system health.

Companies adopting production automation gain early visibility into failures that staging environments never surface. Those avoiding it rely on assumptions, discovering defects only after customers do, when the cost and impact are highest.
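As a rough illustration of a controlled, read-only production check, the sketch below wraps a probe in a latency budget and reports a pass or fail result. The names `run_check`, `catalog_is_readable`, and `slow_search` are hypothetical, and the probes are offline stand-ins; a real probe would more likely issue a read-only request against a live endpoint.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class CheckResult:
    name: str
    ok: bool
    latency_ms: float
    detail: str = ""


def run_check(name: str, probe: Callable[[], bool], max_latency_ms: float) -> CheckResult:
    """Run one read-only probe and fail it if it errors or is too slow."""
    start = time.perf_counter()
    try:
        ok = bool(probe())
        detail = "" if ok else "probe returned a falsy result"
    except Exception as exc:  # a crashing probe counts as a failed check
        ok = False
        detail = f"probe raised {exc!r}"
    latency_ms = (time.perf_counter() - start) * 1000
    if ok and latency_ms > max_latency_ms:
        ok = False
        detail = f"latency {latency_ms:.1f} ms exceeded budget of {max_latency_ms} ms"
    return CheckResult(name, ok, latency_ms, detail)


# Hypothetical probes (assumptions for illustration). A real probe might
# send a GET to a health or catalog endpoint and verify the response.
def catalog_is_readable() -> bool:
    return True


def slow_search() -> bool:
    time.sleep(0.05)  # simulate a slow dependency
    return True


if __name__ == "__main__":
    for result in (
        run_check("catalog", catalog_is_readable, max_latency_ms=500),
        run_check("search", slow_search, max_latency_ms=10),
    ):
        print(result.name, "OK" if result.ok else f"FAIL: {result.detail}")
```

Because the probes only read and never write, a scheduler could run checks like these every few minutes without touching customer data.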

3. Cloud-Based Test Environments & Labs

Testing That Scales With Demand

As software delivery scales globally, fixed on-premise test environments are increasingly becoming a constraint rather than an asset. Cloud-based test environments allow teams to spin up production-like infrastructure on demand, test at scale, and tear everything down once validation is complete. This latest trend in quality assurance is accelerating because modern QA must support parallel testing, geographically distributed teams, and rapid CI/CD pipelines without waiting for environment availability.

In fact, the global cloud testing market is projected to reach $29 billion by 2030, growing at a 13% CAGR from 2025 to 2030.

Organizations adopting cloud test labs gain flexibility, consistency, and the ability to simulate real-world load and configuration scenarios. Those that delay adoption often face environment contention, inconsistent results, and slower release cycles that directly impact delivery confidence.
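The spin-up, test, tear-down cycle can be sketched with a context manager that guarantees cleanup even when a test fails. `FakeCloud` is an in-memory stand-in invented for this example, not a real provider SDK, and the template name is an assumption.

```python
import uuid
from contextlib import contextmanager
from typing import Iterator, Set


class FakeCloud:
    """In-memory stand-in for a cloud provider (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self.active: Set[str] = set()

    def provision(self, template: str) -> str:
        env_id = f"{template}-{uuid.uuid4().hex[:8]}"
        self.active.add(env_id)
        return env_id

    def destroy(self, env_id: str) -> None:
        self.active.discard(env_id)


@contextmanager
def ephemeral_environment(cloud: FakeCloud, template: str) -> Iterator[str]:
    """Provision an environment for the duration of a test run, then remove it."""
    env_id = cloud.provision(template)
    try:
        yield env_id
    finally:
        # Teardown runs even if the tests inside the block raise.
        cloud.destroy(env_id)


if __name__ == "__main__":
    cloud = FakeCloud()
    with ephemeral_environment(cloud, "checkout-stack") as env_id:
        print("running tests against", env_id)
    print("environments left running:", len(cloud.active))
```

Tying teardown to the end of the test run is what keeps on-demand environments from turning into idle, billable infrastructure.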

4. Autonomous Self-Healing UI Tests

Automation That Fixes Itself

Maintaining UI automation has long been one of the most labor-intensive parts of QA. Small UI changes break scripts, and teams spend more time fixing tests than innovating.

Autonomous self-healing UI frameworks are changing that. These tools use machine learning to detect element changes, adapt selectors, and keep tests stable without constant manual updates. In one survey, 43% of testing teams had already started using self-healing tests. The trend is gaining traction because it directly addresses the fragility problem that slows automation velocity in large, rapidly changing applications.

As organizations scale testing across teams and platforms, self-healing reduces flakiness, improves reliability, and frees QA engineers for higher-value work.
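To make the idea of adapting selectors concrete, here is a minimal sketch in which a test stores a fingerprint of the element it expects and falls back to the closest match when the original id disappears. The attribute-overlap score is a simple stand-in for the machine learning models that real tools use, and the element dictionaries are hypothetical.

```python
from typing import Dict, List, Optional

Element = Dict[str, str]


def similarity(fingerprint: Element, candidate: Element) -> float:
    """Fraction of fingerprint attributes that the candidate still matches."""
    if not fingerprint:
        return 0.0
    matches = sum(1 for key, value in fingerprint.items() if candidate.get(key) == value)
    return matches / len(fingerprint)


def find_element(page: List[Element], fingerprint: Element, threshold: float = 0.5) -> Optional[Element]:
    """Locate by id first; if that fails, 'heal' by picking the most similar element."""
    wanted_id = fingerprint.get("id")
    for element in page:
        if wanted_id is not None and element.get("id") == wanted_id:
            return element
    best = max(page, key=lambda el: similarity(fingerprint, el), default=None)
    if best is not None and similarity(fingerprint, best) >= threshold:
        return best
    return None


if __name__ == "__main__":
    # Fingerprint recorded when the test was written (hypothetical values).
    submit = {"id": "submit-btn", "tag": "button", "text": "Place order", "class": "primary"}
    # The page after a redesign renamed the id.
    page = [
        {"id": "btn-checkout", "tag": "button", "text": "Place order", "class": "primary"},
        {"id": "cancel", "tag": "button", "text": "Cancel", "class": "secondary"},
    ]
    print(find_element(page, submit))
```

The threshold matters: set too low, the test may silently click the wrong element, so healed matches are usually worth logging for review.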

5. API Contract Validation Testing

Keeping Microservices From Breaking

Modern software systems no longer fail because of isolated bugs. They fail because independently deployed services stop agreeing with each other. Based on 2 billion checks, API downtime rose 60% between Q1 2024 and Q1 2025.

As microservices, third-party integrations, and partner APIs proliferate, API contract validation has become a critical quality control mechanism. Instead of waiting for end-to-end tests to catch failures, teams now validate schemas, payloads, and expectations at the contract level, all before services are deployed. This new trend in software testing is accelerating because it scales with architecture complexity and reduces late-stage integration surprises.

Organizations adopting contract testing as a part of regular integration testing stabilize releases and decouple team dependencies. Those that avoid it often discover breaking changes only in production, where recovery is slow and costly.
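A contract check can be as small as comparing a sample provider response against the fields and types a consumer relies on. The sketch below assumes a hypothetical order payload and a deliberately simple contract format (field name to Python type); dedicated contract-testing tools offer far richer matching.

```python
from typing import Any, Dict, List

Contract = Dict[str, type]


def contract_violations(contract: Contract, payload: Dict[str, Any]) -> List[str]:
    """List every way the payload breaks the consumer's expectations.

    Extra fields in the payload are tolerated, since adding a field
    normally does not break an existing consumer.
    """
    problems: List[str] = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(f"{field}: expected {expected.__name__}, got {actual}")
    return problems


if __name__ == "__main__":
    # Hypothetical contract the checkout service relies on.
    order_contract: Contract = {"order_id": str, "total_cents": int}

    compatible = {"order_id": "A1", "total_cents": 1299, "currency": "USD"}
    breaking = {"order_id": "A1", "total": 12.99}  # field renamed and retyped

    print(contract_violations(order_contract, compatible))
    print(contract_violations(order_contract, breaking))
```

Run in the provider's pipeline, a check like this surfaces a renamed or retyped field before deployment rather than in an end-to-end test.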

6. Synthetic Test Data Generation

Testing Without Touching Real Data

Access to production-like data has become one of the biggest blockers to effective testing. Privacy regulations, security risks, and data ownership constraints increasingly prevent teams from using real user data in non-production environments.

Synthetic test data generation (creating statistically realistic, privacy-safe datasets that mirror production behavior without exposing sensitive information) is emerging as a practical solution here.

Market projections reflect this demand: the global synthetic data market is projected to grow to $2.1 billion by 2028.

This latest trend in software quality assurance is accelerating because compliance requirements are tightening globally while test coverage expectations continue to rise. Organizations adopting synthetic data unblock testing earlier in the lifecycle and reduce compliance risk. Those that don’t often face stalled test cycles, limited scenario coverage, or regulatory exposure.
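A minimal sketch of the idea: generate user records from a seeded random generator so that no real customer data is involved and every run is reproducible. The field names, name list, and plan weights are assumptions made for this example; production-grade generators usually derive their distributions from profiled production data.

```python
import random
from typing import Dict, List, Union

# Hypothetical value pools; nothing here comes from real users.
FIRST_NAMES = ["Avery", "Jordan", "Riley", "Morgan", "Casey"]
PLANS = ["free", "pro", "enterprise"]
PLAN_WEIGHTS = [70, 25, 5]  # assumed mix, standing in for a profiled distribution

User = Dict[str, Union[str, int]]


def synthetic_users(count: int, seed: int = 42) -> List[User]:
    """Build reproducible fake user records for test environments."""
    rng = random.Random(seed)  # seeded so failures can be replayed exactly
    users: List[User] = []
    for index in range(count):
        name = rng.choice(FIRST_NAMES)
        users.append(
            {
                "user_id": f"u{index:05d}",
                "name": name,
                # example.test is a reserved domain, so no mail can leak out
                "email": f"{name.lower()}.{index}@example.test",
                "age": rng.randint(18, 90),
                "plan": rng.choices(PLANS, weights=PLAN_WEIGHTS)[0],
            }
        )
    return users


if __name__ == "__main__":
    for user in synthetic_users(3):
        print(user)
```

Seeding the generator is the useful design choice here: a failing test can be rerun against exactly the same dataset.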

7. AI-Powered Visual Regression Testing

Seeing What Traditional Tests Miss

Functional correctness alone isn’t enough in a world where visual experience defines user satisfaction. Minor layout shifts, rendering differences across browsers, or device-specific UI alterations can erode trust, conversion, and engagement even when core functionality works.

AI-powered visual regression testing moves beyond pixel comparisons to understand perceptual differences. It flags only meaningful visual deviations and thus reduces false positives. This latest trend in software testing has gained traction because cross-platform complexity and design velocity have outpaced traditional image-diff tooling. Organizations adopting these solutions catch UI and UX regressions earlier, align closer with user expectations, and reduce costly post-release hotfixes driven by visual bugs.
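The difference between a strict pixel diff and a tolerance-aware comparison can be sketched on tiny grayscale images. This is not an AI model; a per-pixel tolerance and a changed-area threshold stand in for the perceptual judgment that such tools learn, and both default values are assumptions.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255


def changed_fraction(baseline: Image, candidate: Image, pixel_tolerance: int = 10) -> float:
    """Share of pixels whose brightness moved by more than the tolerance."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        raise ValueError("images must have identical dimensions")
    total = sum(len(row) for row in baseline)
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance
    )
    return changed / total if total else 0.0


def is_visual_regression(
    baseline: Image, candidate: Image, pixel_tolerance: int = 10, max_changed: float = 0.01
) -> bool:
    """Flag only when a meaningful share of the image has visibly changed."""
    return changed_fraction(baseline, candidate, pixel_tolerance) > max_changed


if __name__ == "__main__":
    baseline = [[200] * 10 for _ in range(10)]
    rendering_noise = [[203] * 10 for _ in range(10)]  # differs everywhere, but barely
    broken_layout = [[0] * 10 for _ in range(2)] + [[200] * 10 for _ in range(8)]

    print(is_visual_regression(baseline, rendering_noise))
    print(is_visual_regression(baseline, broken_layout))
```

A strict pixel comparison would fail both candidates, while the tolerance-aware version flags only the one with a visibly changed region.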

8. Continuous Performance & Load Testing

Performance Isn’t an Afterthought Anymore

In traditional test management, organizations used to bolt on performance testing at the end of development cycles. But today’s digital experiences run under unpredictable loads (such as global releases, peak traffic events, and microservice interdependencies), which makes isolated pre-release performance runs insufficient. Continuous performance and load testing integrates stress, endurance, and throughput validation into the delivery pipeline itself, allowing teams to see performance impact with every change.

In practice, this current trend in software testing is being driven by SRE and DevOps teams that can no longer afford surprises in production. It turns performance from a gate-check into a continuous quality signal that informs capacity planning and architecture trade-offs.
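One way to turn performance into a continuous signal is a pipeline step that compares the 95th-percentile latency of each build against both an absolute budget and the previous baseline. The sketch below assumes latency samples are already collected; the function names, the 10% regression allowance, and the nearest-rank percentile method are choices made for this example.

```python
import math
from typing import List, Optional, Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile of the samples."""
    if not samples:
        raise ValueError("at least one sample is required")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def performance_gate(
    samples_ms: Sequence[float],
    p95_budget_ms: float,
    baseline_p95_ms: Optional[float] = None,
    max_regression: float = 0.10,
) -> List[str]:
    """Return the reasons a build should be flagged; an empty list means pass."""
    failures: List[str] = []
    p95 = percentile(samples_ms, 95)
    if p95 > p95_budget_ms:
        failures.append(f"p95 {p95} ms is over the budget of {p95_budget_ms} ms")
    if baseline_p95_ms is not None and p95 > baseline_p95_ms * (1 + max_regression):
        failures.append(
            f"p95 {p95} ms regressed more than {max_regression:.0%} "
            f"against the baseline of {baseline_p95_ms} ms"
        )
    return failures


if __name__ == "__main__":
    latencies = list(range(1, 101))  # stand-in for measured request latencies
    print(performance_gate(latencies, p95_budget_ms=100))
    print(performance_gate(latencies, p95_budget_ms=100, baseline_p95_ms=80))
```

Comparing against a baseline as well as a fixed budget catches gradual slowdowns that stay under the budget for many releases in a row.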

9. Automated Security & Compliance Testing

Security at the Speed of Delivery

Security threats and regulatory demands aren’t slowing down, yet delivery velocity is accelerating. The consequence? Organizations can no longer treat security as a late-stage checkbox. Quality assurance teams have to embed security and compliance checks early and continuously. Automated security and compliance testing integrates the following within CI/CD pipelines:

- Vulnerability scanning

- SAST/DAST

- Dependency checks

- Policy validation

This integration of QA into the CI/CD pipeline, often referred to as QAOps, enables teams to shift left without slowing down releases. This software quality assurance trend is gaining traction because the cost of breaches and compliance violations continues to rise sharply, making proactive, automated safeguards a business imperative. Teams that wait to adopt these practices expose themselves to preventable risk that grows with scale.
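Of the checks listed above, a dependency check is the simplest to sketch: compare installed versions against the first fixed version named in an advisory, and fail the pipeline on any match. The package names and advisory data below are invented for illustration, and the version parser assumes plain dotted numeric versions; real scanners pull advisories from maintained vulnerability databases.

```python
from typing import Dict, List, Tuple


def parse_version(version: str) -> Tuple[int, ...]:
    """Parse a plain dotted version such as '2.4.1' (assumes numeric parts only)."""
    return tuple(int(part) for part in version.split("."))


def vulnerable_dependencies(installed: Dict[str, str], fixed_in: Dict[str, str]) -> List[str]:
    """Report packages installed at a version older than the advisory's fix."""
    findings: List[str] = []
    for name, version in sorted(installed.items()):
        fix = fixed_in.get(name)
        if fix is not None and parse_version(version) < parse_version(fix):
            findings.append(f"{name} {version} is older than the fixed version {fix}")
    return findings


if __name__ == "__main__":
    # Hypothetical lockfile contents and advisory feed.
    installed = {"examplelib": "2.3.0", "otherlib": "1.10.2", "safelib": "4.0.0"}
    advisories = {"examplelib": "2.4.1", "otherlib": "1.9.0"}

    findings = vulnerable_dependencies(installed, advisories)
    for finding in findings:
        print("FAIL:", finding)
    # A CI step would typically exit non-zero when findings is not empty.
```

Versions are compared as integer tuples rather than strings so that 1.10.2 correctly sorts after 1.9.0.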

10. Low-Code / No-Code Testing Platforms

When Testing Scales Beyond QA

The global low-code market is poised to reach $187 billion by 2030. But it is not just low-code development that is gaining ground. As delivery velocity increases, traditional QA teams alone can no longer keep up with testing demand. Low-code and no-code testing platforms are also gaining momentum because they remove a structural bottleneck: reliance on scarce automation specialists.

These platforms enable product owners, business analysts, and software and QA testers to create and maintain automated tests using visual workflows and natural language constructs. The trend is accelerating globally as enterprises look to expand test coverage without proportionally increasing headcount. Organizations adopting low-code testing distribute quality ownership across teams and release faster with confidence. Those that resist this shift often struggle with backlogs, delayed releases, and under-tested critical flows.

With that, it is a wrap on our list of the latest technology trends in quality assurance. By strategically adopting the latest technology in software testing, modern organizations can test smarter and ship better. But implementing these trends doesn’t happen without challenges.

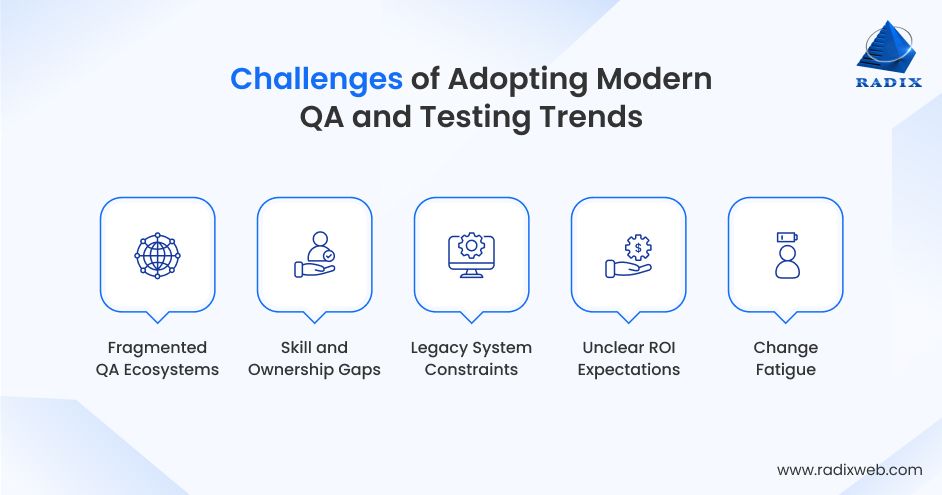

Common Challenges in Implementing Modern QA and Testing Trends

Adopting modern QA and software testing trends is rarely a technology problem alone. For most enterprises, the real challenge lies in translating intent into execution without disrupting delivery, inflating costs, or introducing new risks. While the benefits of these trends are widely understood, implementation often exposes organizational, operational, and cultural friction that slows progress or leads to stalled initiatives.

A common pattern we see across industries is over-indexing on tools while underestimating the effort required to realign processes, skills, and ownership models.

- Automation frameworks are introduced without clear quality metrics.

- AI-driven tools are deployed without the data maturity to support them.

- Performance and security testing are added, but too late to influence architectural decisions.

These gaps don’t reflect a lack of ambition, but the complexity of change at scale and across the software testing lifecycle. Some of the most persistent challenges include:

- Fragmented QA ecosystems: Multiple tools and teams operating in silos reduce visibility and dilute accountability.

- Skill and ownership gaps: Advanced testing approaches demand cross-functional collaboration that many teams are not structured for.

- Legacy system constraints: Older architectures resist continuous and shift-left testing models.

- Unclear ROI expectations: Without measurable outcomes, initiatives lose executive support over time.

- Change fatigue: Teams already under delivery pressure struggle to absorb new practices without guidance.

The cost of navigating these challenges alone is often invisible at first. Missed release windows, quality regressions in production, and reactive firefighting gradually become normalized.

But organizations that succeed tend to do one thing differently: they treat QA transformation as a strategic initiative, not a tooling upgrade.

Working with experienced software testers and QAs to adopt upcoming trends in software testing reduces risk and ensures that quality improvements translate into real business outcomes rather than short-lived experiments.

A Clear Path Forward for Quality in 2026

Software testing solutions are moving towards a new direction. Quality is becoming continuous, intelligent, and deeply embedded across the software lifecycle. For organizations planning for 2026 and beyond, the way forward is not about chasing every new tool. It is about making deliberate, well-informed choices that align testing with business outcomes, user expectations, and long-term scalability. The recent trends in quality assurance outlined in this blog offer a practical blueprint. With these, enterprises can move from reactive quality assurance to proactive quality engineering.

At Radixweb, we help organizations turn this blueprint into execution. With over 25 years of hands-on experience, we bring deep domain expertise, proven implementation frameworks, and real-world success across industries and technologies. Our teams work closely with enterprises to adopt the right testing strategies, integrate them seamlessly into existing ecosystems, and deliver measurable results. As software continues to evolve, having the right partner makes all the difference in realizing the full value of these quality assurance trends. Schedule a no-cost consultation with our QAs and software testers to map out trend adoption, without disruption, and with tangible results.

