Read More

Outstanding IT Software at the 2026 TITAN Business Awards - Read More

ON THIS PAGE

Where Strategy Meets Meaning: Data annotation, when done right, is one of the most strategic levers of AI adoption. I say that from my experience of having led the data annotation efforts at Radixweb from the business side. What you see below is my attempt to explain how LLMs change the stakes, where annotation decisions go wrong, and what business leaders must do to guide interpretation, judgement, and outcomes.

For most business leaders, data annotation sits in the background, disconnected from broad leadership conversations. It sounds technical, operational, and far removed from the real work of running a business. Even in organizations that talk seriously about AI, annotation is often treated as a prerequisite rather than a lever. It is something to get through so the ‘real’ intelligence can begin.

This view made sense when annotation was slow, manual, and limited in scope. It makes far less sense in a world where large language models (LLMs) can interpret, summarize, and classify human language at scale.

Now, what has changed is not the activity itself, but its impact. Annotation is no longer just about preparing data. It is about deciding how your organization understands customers, markets, and internal signals. And doing so repeatedly, consistently, and at speed.

This is why annotation has become a strategic concern, even if it rarely shows up that way in data strategy consulting or on executive agendas.

Why Annotation Deserves Leadership Attention

We live in a data surplus world. Every organization today is drowning in unstructured information: emails, calls, chats, proposals, feedback, internal notes.... The promise of LLMs is that this information can finally be made usable. The risk, however, is that it will be made usable in the wrong way.

Annotation is where ambiguity is resolved. Someone (a human, a model, or a combination of both) decides what a piece of information represents. Is it interest of curiosity? Friction or noise? Early warning or background chatter?

Once those decisions are embedded in systems, they go on to shape dashboards, forecasts, prioritization, and ultimately behavior. Over time, they stop being questions and simply become “what the data says.”

When LLMs are used for data annotation, they amplify this effect.

They allow interpretation to scale far beyond what human teams could have managed on their own. But they do not inherently understand business consequences. They are optimized for coherence, not for what breaks when an interpretation is wrong.

That gap between plausibility and consequence is where executives should be paying attention.

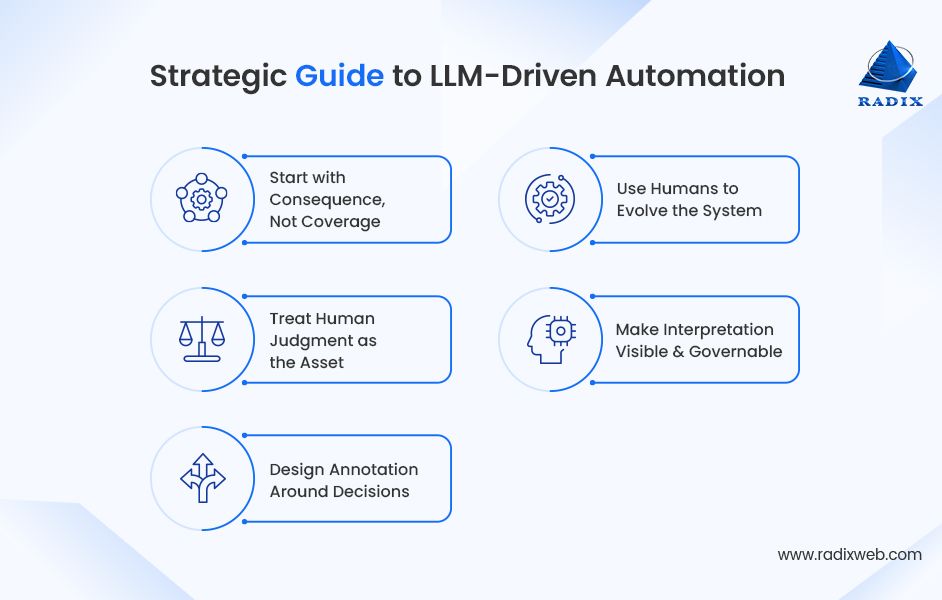

A Strategic Playbook for LLM-Driven Annotation

At Radixweb, we didn’t arrive at our understanding of LLMs for data annotation through theory. We arrived here through friction.

We had years of data, increasingly sophisticated tools, and substantial data engineering expertise. Yet we found ourselves debating what signals actually meant. The problem wasn’t a lack of intelligence. It was a lack of shared, explicit judgment.

What follows is the strategic approach that emerged from that experience... not as a template to copy, but as a way to think clearly about where LLMs help, where they don’t, and how to design annotation, so it supports real decisions.

1. Start With Consequence, Not Coverage

For decision makers, the instinct with LLMs is to start big: label everything, see everything, capture all signals. Strategically, that is the wrong starting point.

Why? Because the most important data in any organization is rarely the most common. It is the data, when misread, leads to outsizes consequences like missed deals, unexpected churn, misallocated effort, and false confidence. Those cases are often ambiguous, context-heavy, and uncomfortable to interpret.

So, before scaling annotation, identify where misunderstanding has historically hurt the business. Start there. If a system cannot handle the cases where judgment matters most, scaling it will only make mistakes quieter and harder to detect.

2. Treat Human Judgment as the Asset, Not the Bottleneck

One of the most common mistakes in data annotation strategy is treating human-in-the-loop as a cost that needs to be minimized. In reality, experienced human judgment is the scarcest most valuable input in the system.

The LLMs’ role should be to absorb and reproduce that judgement, not replace it prematurely. That means spending time upfront making implicit reasoning explicit: why experienced teams read certain systems the way they do, what they discount, and what outcomes they try to avoid.

If LLMs don’t mirror this reasoning, the failure is instructive. It shows where your own understanding is more contextual than you realized and where the system needs guidance instead of autonomy.

3. Design Annotation Around Decisions, Not Labels

Most executives don’t make decisions based on flat categories. They think in tradeoffs:

- Urgency vs. Readiness

- Opportunity vs. Risk

- Signal vs. Noise

Data annotation systems that collapse complexity into simple labels inevitably drift away from how leaders actually reason. Instead, strategic annotation should preserve the dimensions that influence action.

Sure, not every nuance needs to be encoded, but the ones that shape prioritization, timing, and confidence usually do. When annotation reflects decision logic rather than analytical convenience, downstream systems become allies rather than obstacles.

4. Use Humans to Evolve the System, Not Police It

Adding more ‘human review’ does not automatically improve outcomes. When people are asked to validate large volumes of machine output, they default to speed and agreement. With that, judgement quality erodes.

In our practical experiences, we’ve found greater value in focusing human involvement where interpretation was changing or where mistakes would be expensive. In this role, humans are not auditors but sense-makers. Their job isn’t to catch errors, but to surface new patterns and challenge existing assumptions.

This requires a shift in incentives and expectations. And, more importantly, a willingness to accept that not everything needs to be reviewed to be understood.

5. Make Interpretation Visible and Governable

One of the biggest and yet most underestimated risks of LLM-assisted data annotation is opacity. That’s a challenge that most organizations attempt to address through data modernization, such as improved governance and traceability.

When models are used informally to clean, summarize, or classify data, layers of interpretation creep into systems, without ownership or traceability. Over time, this creates strategic blind spots. Leaders react to outputs they can no longer explain or fully trust.

The solution isn’t restriction, but transparency. Interpretation should be visible, versioned, and open to challenge. Not because perfection is achievable, but because alignment requires shared understanding of how meaning is being created.

From Back-Office Task to Executive MandateI have led Radixweb’s internal data annotation efforts from the business side. I have seen firsthand how quickly annotation moves from “background task” to strategic differentiator. The real shift wasn’t adopting LLMs. It was taking ownership of how meaning gets defined and reused across the organization.So, my advice to business leaders is: Don’t delegate interpretation. Get involved in deciding which signals matter, where mistakes are costly, and how judgment is encoded. Annotation is no longer an implementation detail. It’s a leadership responsibility. And those who treat it that way will build AI systems they can actually trust.

Ready to brush up on something new? We've got more to read right this way.